Introduction: This article explains how Function Compute Serverless Task deduplicates task triggers, and how to use this capability in scenarios that require strictly accurate task execution.

Foreword

Whether in big data processing or in message processing, one capability of a task system is critical: guaranteed deduplication of task triggers. This capability is essential in scenarios that demand extremely high accuracy, such as finance. As a serverless task processing platform, Serverless Task must provide the same guarantee, with accurate trigger semantics both at the user application level and inside its own system. Focusing on message processing reliability, this article introduces some technical details inside Function Compute and shows how to use its capabilities in real applications to make task execution more reliable.

Talking about task deduplication

Any discussion of asynchronous message processing systems has to start with the basic semantics of message processing. In an asynchronous message processing system (a task system), the processing flow of a message can be simplified as shown in the following figure:

Figure 1

The user sends a task → the task enters the queue → the task processing unit listens on the queue and fetches the message → the message is dispatched to an actual worker for execution.

Throughout this flow, the failure of any component (or link) can cause messages to be delivered incorrectly. A typical task system offers up to three levels of message processing semantics:

● At-Most-Once: each message is delivered at most once. If a network partition occurs or a component goes down, messages may be lost.

● At-Least-Once: each message is delivered at least once. The transmission path retries on errors, and retransmission ensures that the downstream eventually receives the upstream message. However, when a component goes down or the network is partitioned, the same message may be delivered multiple times.

● Exactly-Once: each message is processed exactly once. This does not mean that no retransmission happens when a component goes down or the network is partitioned; it means that a retransmission does not change the receiver's state, so delivering the message several times has the same result as delivering it once. In production, Exactly-Once is usually achieved by combining retransmission with deduplication (idempotency) on the receiver side.
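To make the last point concrete, here is a minimal in-memory sketch in Go of "retransmission plus receiver-side deduplication". It assumes a sender that retransmits until an acknowledgement reaches it (at-least-once) and a receiver that remembers the IDs of messages it has already applied (idempotent). `Receiver`, `Handle`, `SendAtLeastOnce`, and the simulated lost acknowledgements are hypothetical names for illustration, not part of any Function Compute API.

```go
package main

import "fmt"

// Message carries a sender-assigned ID that stays the same across retransmissions.
type Message struct {
	ID      string
	Payload int
}

// Receiver deduplicates by message ID, so a retransmitted message
// does not change its state a second time (idempotent receive).
type Receiver struct {
	seen    map[string]bool
	balance int
}

func NewReceiver() *Receiver {
	return &Receiver{seen: make(map[string]bool)}
}

// Handle applies a message only the first time its ID is seen,
// and acknowledges every delivery, including duplicates.
func (r *Receiver) Handle(m Message) bool {
	if !r.seen[m.ID] {
		r.seen[m.ID] = true
		r.balance += m.Payload
	}
	return true
}

// SendAtLeastOnce retransmits until an acknowledgement reaches the sender.
// lostAcks simulates acknowledgements dropped by a network partition: the
// receiver processed the message, but the sender never learned about it.
func SendAtLeastOnce(r *Receiver, m Message, lostAcks int) int {
	attempts := 0
	for {
		attempts++
		acked := r.Handle(m)
		if acked && attempts > lostAcks {
			return attempts // acknowledgement reached the sender
		}
		// acknowledgement lost in transit: retransmit the same message
	}
}

func main() {
	r := NewReceiver()
	attempts := SendAtLeastOnce(r, Message{ID: "msg-1", Payload: 100}, 2)
	// Delivered three times, applied once.
	fmt.Println(attempts, r.balance) // 3 100
}
```

The key design choice is that the ID is assigned by the sender and reused on every retransmission; without a stable ID the receiver has nothing to deduplicate on.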

Function Compute provides Exactly-Once semantics for task distribution: in all cases, repeated submissions of a task are treated by the system as the same trigger, and the task is dispatched only once.

Referring to Figure 1, deduplicating tasks requires guarantees in at least two dimensions:

1. System-side guarantee: failover of the task scheduling system itself does not affect the correctness or uniqueness of message delivery;

2. User-side guarantee: a mechanism that lets users deduplicate task triggers according to the semantics of their entire business logic.

Below, we discuss how Function Compute achieves these capabilities, using a simplified Serverless Task architecture.

Implementing Trigger Deduplication for Function Compute Asynchronous Tasks

The architecture of the Function Compute task system is shown in the following figure:

Figure 2

First, the user calls the Function Compute API to send a task (step 1) to the system's API-Server, which validates the message and forwards it to the internal queue (step 2.1). A background asynchronous module listens on the internal queue in real time (step 2.2) and calls the resource management module to obtain runtime resources (steps 2.2-2.3). Once the runtime resources are obtained, the scheduling module delivers the task data to the VM-level client (step 3.1), which forwards the task to the user's actual runtime resources (step 3.2). To provide the two dimensions of guarantee mentioned above, we need support at the following levels:

1. System-side guarantee: a failover at any point in steps 2.1 to 3.1 can trigger step 3.2 only once; that is, the user instance is scheduled to run only once;

2. User-side, application-level deduplication: the user may repeat step 1, but step 3.2 is actually triggered only once.

System-side deduplication of task distribution during graceful upgrades and failover

Once the user's message enters the Function Compute system (that is, step 2.1 is complete), the request returns a response with HTTP status code 202, and the user can consider the task successfully submitted. From the moment the task message enters the message queue (MQ), its life cycle is maintained by the Scheduler, so the stability of the Scheduler and the MQ directly determines whether the system can achieve Exactly-Once.

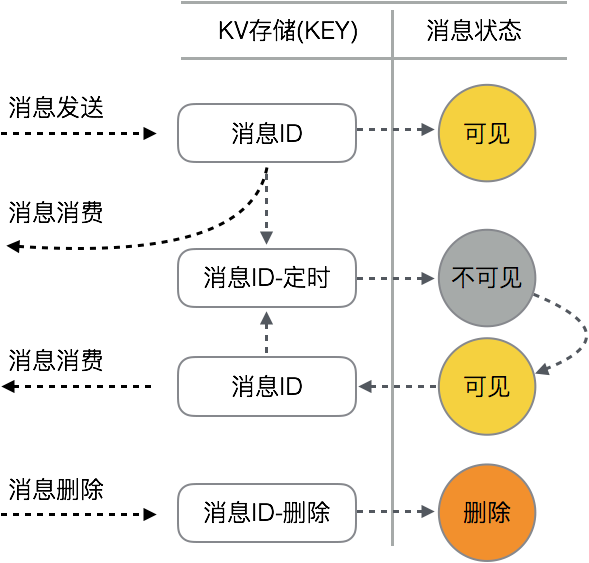

Most open-source messaging systems (such as MQ and Kafka) provide multi-replica message storage and exclusive consumption semantics, and the message queue used by Function Compute (built on RocketMQ) is no exception. Its underlying storage keeps three replicas, so we do not need to worry about the reliability of message storage. In addition, the message queue used by Function Compute has the following characteristics:

1. Exclusive consumption: once a consumer receives a message from a queue, the message enters an "invisible" state, in which other consumers cannot receive it;

2. The consumer holding a message must keep extending the message's invisibility period; when it finishes consuming the message, it must explicitly delete it. The entire life cycle of a message in the queue is therefore as shown in the following figure:

Figure 3

The Scheduler is mainly responsible for processing messages. Its work consists of the following parts:

1. Listen on the queues it is responsible for, according to the per-function scheduling policy computed by the load-balancing module;

2. When a message appears in a queue, pull it and keep its state in memory; until consumption completes (the user instance returns the function's execution result), keep extending the message's invisibility period so that the message does not reappear in the queue;

3. When the task completes, explicitly delete the message.

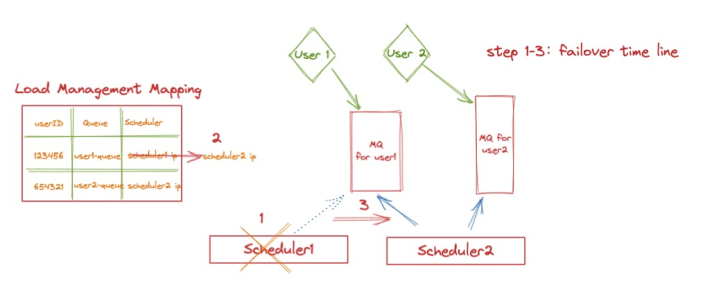

For queue scheduling, Function Compute uses a "single-queue" mode for ordinary users: all asynchronous execution requests of each user go into an independent queue, isolated from other users, and each queue is handled by exactly one fixed Scheduler. This mapping is managed by Function Compute's load-balancing service, as shown in the following figure (we will cover this in more detail in a later article):

Figure 4
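The single-queue mode, and the queue takeover discussed below, can be sketched as an ownership table: each user's queue has exactly one owning Scheduler, and when a Scheduler leaves (crash or upgrade), its queues are handed to the remaining Schedulers. This Go sketch assumes a simple least-loaded reassignment rule purely for illustration; the actual policy of the Function Compute load-balancing service is not described in this article, and `Assignment` and `RemoveScheduler` are hypothetical names.

```go
package main

import (
	"fmt"
	"sort"
)

// Assignment maps each user's queue to exactly one Scheduler.
type Assignment struct {
	owner map[string]string // queue -> scheduler
}

func NewAssignment() *Assignment { return &Assignment{owner: make(map[string]string)} }

func (a *Assignment) Assign(queue, scheduler string) { a.owner[queue] = scheduler }

func (a *Assignment) Owner(queue string) string { return a.owner[queue] }

// load counts how many queues each live scheduler currently owns.
func (a *Assignment) load(live []string) map[string]int {
	n := make(map[string]int, len(live))
	for _, s := range live {
		n[s] = 0
	}
	for _, s := range a.owner {
		if _, ok := n[s]; ok {
			n[s]++
		}
	}
	return n
}

// RemoveScheduler hands every queue owned by the departing scheduler to the
// least-loaded live scheduler (ties broken by name). Because the table maps
// each queue to a single owner, no queue ever has two owners. live must be non-empty.
func (a *Assignment) RemoveScheduler(gone string, live []string) {
	var orphaned []string
	for q, s := range a.owner {
		if s == gone {
			orphaned = append(orphaned, q)
		}
	}
	sort.Strings(orphaned) // deterministic order
	n := a.load(live)
	for _, q := range orphaned {
		best := ""
		for _, s := range live {
			if best == "" || n[s] < n[best] || (n[s] == n[best] && s < best) {
				best = s
			}
		}
		a.owner[q] = best
		n[best]++
	}
}

func main() {
	a := NewAssignment()
	a.Assign("user-a", "scheduler-1")
	a.Assign("user-b", "scheduler-1")
	a.Assign("user-c", "scheduler-2")
	a.Assign("user-d", "scheduler-3")
	// scheduler-1 goes down; its queues are taken over by the others.
	a.RemoveScheduler("scheduler-1", []string{"scheduler-2", "scheduler-3"})
	fmt.Println(a.Owner("user-a"), a.Owner("user-b")) // scheduler-2 scheduler-3
}
```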

When Scheduler 1 goes down or is being upgraded, a task can be in one of two states:

1. If the message has not yet been delivered to the user's execution instance (steps 3.1 to 3.2 in Figure 2), then once the queue this Scheduler was responsible for is taken over by another Scheduler, the message becomes visible again after its invisibility period expires, and Scheduler 2 receives it and triggers execution.

2. If the message has already been dispatched for execution (step 3.2), we rely on the Agent in the user VM for state management when the message reappears at Scheduler 2. Scheduler 2 sends an execution request to the corresponding Agent; if the Agent finds that the message already exists in memory, it does not execute it again, and instead reports the execution result to Scheduler 2 over this connection once the original execution finishes, completing failover recovery.
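The Agent-side check in case 2 can be sketched as an in-memory registry keyed by message ID: the first execution request starts the function, and any later request for the same ID (for example, from Scheduler 2 after failover) waits for that same execution's result instead of starting a new one. This Go sketch is an assumption about how such a check could look; `Agent`, `Execute`, and the `run` callback are hypothetical names.

```go
package main

import (
	"fmt"
	"sync"
)

// execution tracks one in-flight or finished run of a message.
type execution struct {
	done   chan struct{}
	result string
}

// Agent runs each message ID at most once and shares the result with every caller.
type Agent struct {
	mu    sync.Mutex
	execs map[string]*execution
	runs  int // number of times user code actually ran
}

func NewAgent() *Agent { return &Agent{execs: make(map[string]*execution)} }

// Execute starts run for a new message ID; for an ID already in memory it
// skips execution and waits for the existing run's result.
func (a *Agent) Execute(msgID string, run func() string) string {
	a.mu.Lock()
	if e, ok := a.execs[msgID]; ok {
		a.mu.Unlock()
		<-e.done // duplicate request: wait for the original execution
		return e.result
	}
	e := &execution{done: make(chan struct{})}
	a.execs[msgID] = e
	a.runs++
	a.mu.Unlock()

	e.result = run()
	close(e.done) // publishes result to any waiting duplicate requests
	return e.result
}

func main() {
	a := NewAgent()
	started := make(chan struct{})
	release := make(chan struct{})
	results := make([]string, 2)
	var wg sync.WaitGroup

	wg.Add(1)
	go func() { // request from Scheduler 1, which then goes down
		defer wg.Done()
		results[0] = a.Execute("msg-42", func() string {
			close(started)
			<-release // the user function is still running
			return "ok"
		})
	}()
	<-started
	wg.Add(1)
	go func() { // Scheduler 2 re-sends the same message after failover
		defer wg.Done()
		results[1] = a.Execute("msg-42", func() string { return "should not run" })
	}()
	close(release)
	wg.Wait()
	fmt.Println(results, a.runs) // [ok ok] 1
}
```

A production Agent would also need to evict old entries so memory does not grow without bound; that is omitted here.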

User-side deduplication of task distribution at the business level

Inside the system, Function Compute guarantees that each message is consumed exactly once even when a single component fails. However, if the user repeatedly triggers function execution for the same business data, Function Compute cannot tell whether the different messages are logically the same task. This often happens during network partitions. In Figure 2, if the user's call in step 1 times out, there are two possibilities:

1. The message never reached Function Compute, and the task was not submitted;

2. The message reached Function Compute and was enqueued, so the task was submitted successfully, but because of the timeout the user cannot know that the submission succeeded.

In most cases, the user will retry the submission. In case 2, the same task is then submitted and executed more than once. Function Compute therefore needs a mechanism that guarantees business correctness in this scenario.

Function Compute provides a task ID concept (StatefulAsyncInvocationID). The ID is globally unique, and users can specify one each time they submit a task. When a request times out, the user can retry any number of times, and Function Compute checks every retry: it stores task metadata in a DB internally, and when a request carrying an ID that already exists enters the system, the request is rejected with a 400 error. From this response, the client knows that the task has already been submitted.
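The check and the client-side retry loop can be sketched in Go as follows, with an in-memory map standing in for the metadata DB and a conditional insert playing the role of a unique-key constraint. Function Compute's actual storage and request handling are not shown in this article; `TaskStore`, `Submit`, `SubmitWithRetry`, and `errTimeout` are hypothetical, and the status codes follow the 202/400 behavior described above. In a real client you would also check the error code in the 400 response to distinguish a duplicate task ID from other bad requests; this sketch assumes every 400 means "duplicate ID".

```go
package main

import (
	"errors"
	"fmt"
	"net/http"
	"sync"
)

// TaskStore stands in for the task metadata DB; Submit behaves like an insert
// guarded by a unique key on the task ID.
type TaskStore struct {
	mu    sync.Mutex
	tasks map[string]string // task ID -> payload
}

func NewTaskStore() *TaskStore { return &TaskStore{tasks: make(map[string]string)} }

// Submit returns 202 for a new task ID and 400 if the ID was already submitted.
func (s *TaskStore) Submit(taskID, payload string) int {
	s.mu.Lock()
	defer s.mu.Unlock()
	if _, exists := s.tasks[taskID]; exists {
		return http.StatusBadRequest
	}
	s.tasks[taskID] = payload // accepted and enqueued exactly once
	return http.StatusAccepted
}

var errTimeout = errors.New("request timed out")

// SubmitWithRetry resends the same task ID until it gets a definitive answer.
// send models the network: it may return errTimeout even if the server
// already accepted the request.
func SubmitWithRetry(send func() (int, error), maxAttempts int) (bool, error) {
	for i := 0; i < maxAttempts; i++ {
		code, err := send()
		if errors.Is(err, errTimeout) {
			continue // outcome unknown: safe to retry because the ID is reused
		}
		if err != nil {
			return false, err
		}
		switch code {
		case http.StatusAccepted, http.StatusBadRequest:
			// 202: accepted now; 400: accepted by an earlier attempt.
			return true, nil
		default:
			return false, fmt.Errorf("unexpected status %d", code)
		}
	}
	return false, errTimeout
}

func main() {
	store := NewTaskStore()
	attempt := 0
	// The server accepts the first request, but its response is lost.
	send := func() (int, error) {
		attempt++
		code := store.Submit("task-uuid-1", "payload")
		if attempt == 1 {
			return 0, errTimeout
		}
		return code, nil
	}
	ok, err := SubmitWithRetry(send, 5)
	fmt.Println(ok, err, len(store.tasks)) // true <nil> 1
}
```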

Taking the Go SDK as an example, the code that triggers the task can be written as follows:

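```go
import (
	"log"

	fc "github.com/aliyun/fc-go-sdk"
)

// SubmitJob submits an asynchronous task under a caller-chosen, globally unique task ID.
// fcClient is an *fc.Client created elsewhere (for example, with fc.NewClient).
func SubmitJob(fcClient *fc.Client) {
	invokeInput := fc.NewInvokeFunctionInput("ServiceName", "FunctionName")
	// Asynchronous invocation carrying the task ID (StatefulAsyncInvocationID);
	// reuse the same ID whenever this submission is retried.
	invokeInput = invokeInput.WithAsyncInvocation().WithStatefulAsyncInvocationID("TaskUUID")

	invokeOutput, err := fcClient.InvokeFunction(invokeInput)
	if err != nil {
		log.Printf("submit failed: %v", err)
		return
	}
	log.Printf("task submitted: %v", invokeOutput)
	// ...
}
```

This submits a task with a unique ID. "TaskUUID" should be an ID generated once per logical task and reused on every retry of that task.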

Summary

This article introduced the technical details of task-trigger deduplication in Function Compute Serverless Task, which supports scenarios with strict requirements on the accuracy of task execution. With Serverless Task, you don't need to worry about failover of any system component: each submitted task is executed exactly once. To deduplicate according to your business semantics, set a globally unique task ID when submitting a task, and Function Compute will deduplicate the task for you.

Copyright statement: The content of this article is contributed by Alibaba Cloud's real-name registered users. The copyright belongs to the original author. The Alibaba Cloud developer community does not own the copyright and does not assume the corresponding legal responsibility.
