Data Analysis in Asynchronous Task Processing System

Data processing, machine learning training, and statistical analysis are the most common kinds of offline tasks. After a series of preprocessing steps, such tasks are usually submitted to a task platform for batch training and analysis. Python has become one of the most widely used languages in the data field thanks to its rich data processing libraries. Function Compute natively supports a Python runtime and makes it quick to bring in third-party libraries, so it is very convenient for processing asynchronous tasks.
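To make this concrete, here is a minimal sketch of what a Python analysis function might look like. It assumes the usual `handler(event, context)` entry point of the Python runtime and an event delivered as bytes; the `latencies_ms` field is a hypothetical name chosen only for this example, and the standard-library `statistics` module stands in for whatever third-party library a real job would use.

```python
import json
import statistics


def handler(event, context):
    """Summarize a batch of numeric samples passed in as a JSON event."""
    # The Python runtime typically delivers the event as bytes; accepting
    # str as well keeps the sketch easy to run locally.
    if isinstance(event, (bytes, bytearray)):
        event = event.decode("utf-8")
    payload = json.loads(event)

    # "latencies_ms" is a hypothetical field name used for illustration.
    samples = payload["latencies_ms"]
    summary = {
        "count": len(samples),
        "mean": statistics.mean(samples),
        "median": statistics.median(samples),
        "max": max(samples),
    }
    # The returned string becomes the result of the invocation.
    return json.dumps(summary)
```

Locally, the function can be exercised with `handler(b'{"latencies_ms": [10, 20, 30, 100]}', None)`.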

Common demands of data analysis scenarios

Data analysis workloads tend to run for a long time and at high concurrency. In offline scenarios, a large batch of data is often triggered at once for centralized processing. Because of this triggering pattern, business teams care a great deal about resource utilization (cost) and want to keep costs as low as possible while still meeting efficiency targets. The requirements can be summarized as follows:

- Convenient development, with good support for third-party packages and custom dependencies;

- Support for long-running tasks: the task status can be viewed during execution, the instance can be logged in to for operations, and a task can be stopped manually if a data error is found;

- High resource utilization and optimal cost.

Function Compute asynchronous tasks are a good fit for these requirements.

Typical Case - Database Autonomous Service

Basic business situation

The database inspection platform within Alibaba Cloud Group is mainly used to optimize and analyze slow SQL queries and logs. The platform's workload falls into two main categories: offline training and online analysis. Online analysis runs at a scale of tens of thousands of cores, and offline jobs also consume tens of thousands of core-hours per day. Because the timing of online analysis and offline training is unpredictable, it is hard to raise the overall resource utilization of the cluster, and business peaks demand a great deal of elastic computing capacity. After adopting Function Compute, the architecture of the whole business is as follows:

Business Pain Points and Architecture Evolution

The database inspection platform is responsible for SQL optimization and analysis for the databases in every Region across Alibaba's network. MySQL data comes from the clusters in each Region and is pre-aggregated and stored per Region. Because analysis requires cross-Region aggregation and statistics, the inspection platform first tried building a large Flink cluster on the intranet for statistical analysis. In practice, however, it ran into the following problems:

- Iterating on data processing algorithms is cumbersome, mainly in deploying, testing, and releasing them. The limits of Flink's runtime capabilities slow down the release cycle considerably;

- Flink's support for common and custom third-party libraries is weak. Some machine learning and statistics libraries that the algorithms depend on are either missing from Flink's official Python runtime or only available in old versions that are inconvenient to use and do not meet the requirements;

- The Flink forwarding path is long, which makes troubleshooting difficult;

- It is hard to scale out quickly enough and obtain enough resources at peak time, and the overall cost is very high.

After learning about Function Compute, the team migrated the algorithm tasks that had run on Flink, moving the core training and statistical algorithms to Function Compute. Using the capabilities that Function Compute asynchronous tasks provide, development, operations and maintenance, and cost all improved substantially.

Results of migrating to the Function Compute architecture

- After migrating to Function Compute, the system can fully absorb peak traffic and quickly complete the daily analysis and training tasks;

- The rich runtime capabilities of Function Compute support rapid business iteration;

- For the same number of cores, the computing cost is one third of the original Flink cost.

Function Compute asynchronous tasks are well suited to this kind of data processing. Function Compute lowers the cost of computing resources and frees you from complicated platform operations and maintenance, so you can focus on developing and optimizing algorithms.

Best Practices for Function Compute Asynchronous Tasks - Kafka ETL

ETL is a common task in data processing. The raw data lives in Kafka or in a database, and the business needs to process it and then write it to another storage medium (or back to the original task queue). This kind of workload is also a clear task scenario. If you use cloud middleware services (such as a cloud-hosted Kafka), you can rely on Function Compute's trigger integration ecosystem to connect to Kafka easily, without having to deal with Kafka Connector deployment, error handling, and similar operations.

Requirements for ETL Task Scenarios

An ETL task usually has three parts: a source, a sink, and a processing unit. Besides computing power, an ETL task therefore needs the task system to integrate well with upstream and downstream systems. In addition, because data processing must be accurate, the task processing system needs to provide task deduplication and Exactly Once semantics. Messages that fail processing also need a compensation mechanism, such as retries or a dead letter queue. In summary:

Accurate execution of tasks:

- Repeatedly triggered tasks are deduplicated;

- Tasks support compensation and a dead letter queue;

Upstream and downstream of the task:

- Data can be pulled easily and delivered to other systems after processing;

Operator capability requirements:

- User-defined operators are supported, so that a variety of data processing tasks can be carried out flexibly.
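The execution requirements above (deduplication, retries, and a dead letter queue) can be illustrated with a toy in-memory model. This is only a sketch of the semantics, not how Function Compute implements them; the `TaskRunner` class, its method names, and the `to_upper` operator are all hypothetical.

```python
from typing import Callable, Dict, List, Set, Tuple


class TaskRunner:
    """Toy in-memory model of deduplication, retries and a dead letter queue."""

    def __init__(self, process: Callable[[str], str], max_attempts: int = 3):
        self.process = process                      # user-defined operator
        self.max_attempts = max_attempts
        self.seen: Set[str] = set()                 # task IDs already accepted
        self.results: Dict[str, str] = {}           # task ID -> result
        self.dead_letters: List[Tuple[str, str, str]] = []  # (ID, payload, error)

    def submit(self, task_id: str, payload: str) -> str:
        # Deduplication: a task ID that was already accepted is not run again.
        if task_id in self.seen:
            return "duplicate"
        self.seen.add(task_id)

        last_error = ""
        # Compensation: retry the operator up to max_attempts times.
        for _ in range(self.max_attempts):
            try:
                self.results[task_id] = self.process(payload)
                return "succeeded"
            except Exception as exc:
                last_error = str(exc)

        # All attempts failed: park the task for later compensation.
        self.dead_letters.append((task_id, payload, last_error))
        return "dead-lettered"


def to_upper(payload: str) -> str:
    """Hypothetical operator that rejects empty payloads."""
    if not payload:
        raise ValueError("empty payload")
    return payload.upper()


runner = TaskRunner(to_upper)
print(runner.submit("task-1", "hello"))  # succeeded
print(runner.submit("task-1", "hello"))  # duplicate
print(runner.submit("task-2", ""))       # dead-lettered
```

Keying deduplication on a caller-supplied task ID is what lets a trigger retry a delivery safely: a repeated submission with the same ID is recognized and skipped.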

Serverless Task support for ETL tasks

The Destination feature of Function Compute meets the ETL requirements for convenient upstream and downstream connections and accurate task execution. The rich runtime support of Function Compute also makes data processing tasks very flexible. In the Kafka ETL scenario, the main Serverless Task capabilities we use are as follows:

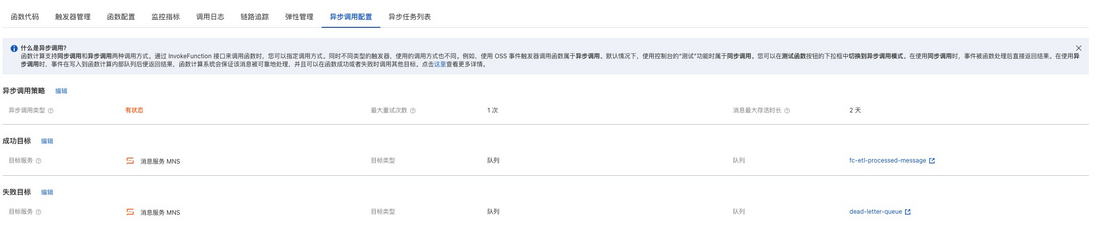

Asynchronous destination configuration:

- Configuring a success destination delivers tasks automatically to downstream systems (such as queues);

- Configuring a failure destination provides dead letter queue capability: failed tasks are delivered to a message queue to await later compensation;

Flexible operator and third-party library support:

Python is one of the most widely used languages in data processing because of its rich third-party libraries for statistics and computation. The Python runtime of Function Compute supports packaging third-party libraries, so you can quickly verify prototypes and test them online.

Kafka ETL task processing example

Let's take a simple ETL task as an example. The data comes from Kafka; after Function Compute processes it, the task execution result, together with the upstream and downstream information, is pushed to Message Service (MNS). The source code of the Function Compute part of the project is at https://github.com/awesome-fc/Stateful-Async-Invocation
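As a rough idea of what the processing step can look like, here is a minimal sketch of an ETL handler. It is not the code from the linked repository. It assumes the event is a JSON array of Kafka records with fields such as `key`, `topic`, and `value`, as in the sample message shown later in this walkthrough, and the `transform` step (upper-casing the value) is a hypothetical placeholder for real business logic.

```python
import json


def transform(record: dict) -> dict:
    """Hypothetical per-record step: normalize the value to upper case."""
    out = dict(record)
    out["value"] = str(record.get("value", "")).upper()
    return out


def handler(event, context):
    """Process one batch of Kafka records and return the processed batch."""
    if isinstance(event, (bytes, bytearray)):
        event = event.decode("utf-8")
    records = json.loads(event)

    if not isinstance(records, list):
        # Raising makes the invocation fail, so a configured failure
        # destination (the dead letter queue) can receive the task.
        raise ValueError("expected a JSON array of Kafka records")

    processed = [transform(record) for record in records]
    # The return value is what a success destination would receive
    # as the response payload.
    return json.dumps(processed, indent=2)
```

Returning the processed batch, rather than writing it to the sink inside the function, leaves delivery to the configured destination and keeps the function itself free of queue client code.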

Resource preparation

Kafka resource preparation

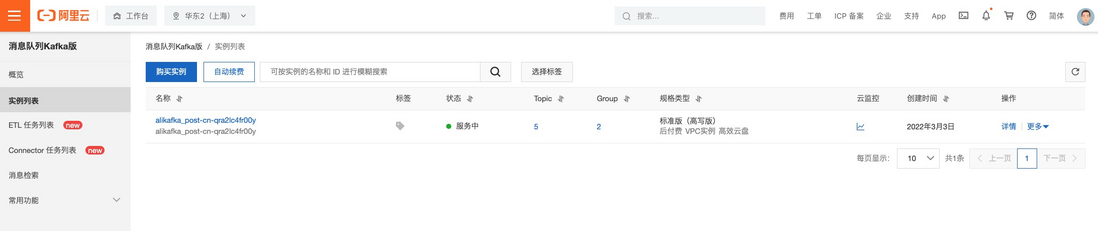

- Go to the Kafka console, click Buy Instance, and deploy the instance. Wait for the deployment to complete;

- Enter the created instance and create a Topic for testing.

Destination resource preparation (MNS)

Go to the MNS console and create two queues:

- dead-letter-queue: used as the dead letter queue. When message processing fails, the execution context information is delivered here;

- fc-etl-processed-message: used as the delivery destination after a task executes successfully.

After creation, the queues appear as shown in the following figure:

Deploy

Download and install Serverless Devs:

npm install @serverless-devs/s

For detailed documentation, please refer to the Serverless Devs installation documentation.

Configure key information:

s config add

For detailed documentation, please refer to the Alibaba Cloud key configuration documentation.

- Enter the project, change the destination ARN in the s.yaml file to the ARN of the MNS queues created above, and change the service role to an existing role;

Deployment:

s deploy -t s.yaml

Configure ETL tasks

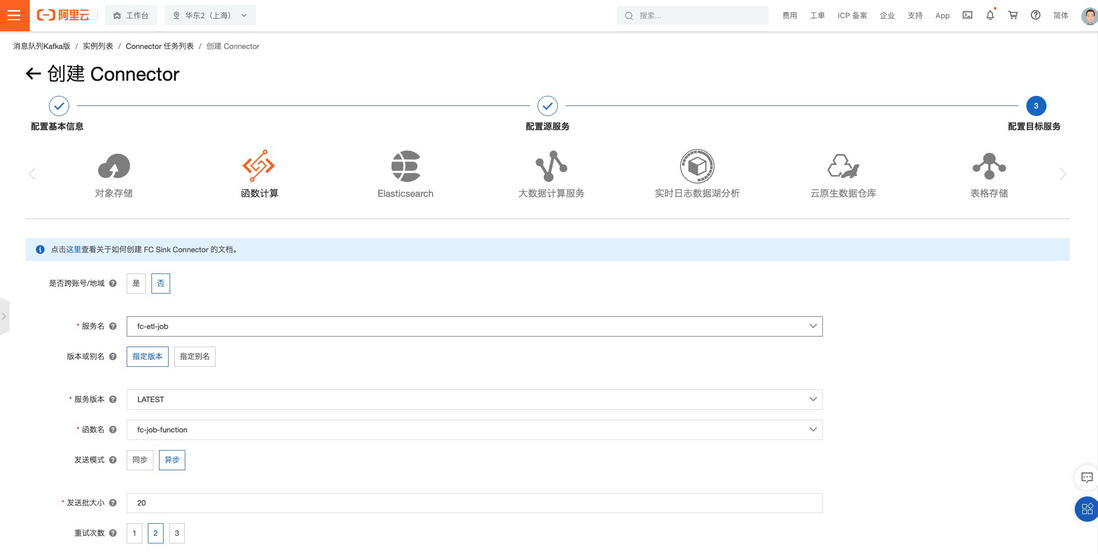

- Go to the Connector task list tab of the Kafka console and click Create Connector;

- After configuring the basic information and the source Topic, configure the target service. Here we choose Function Compute as the target.

On the Function Compute asynchronous configuration page, the current configuration looks as follows:

Test ETL tasks

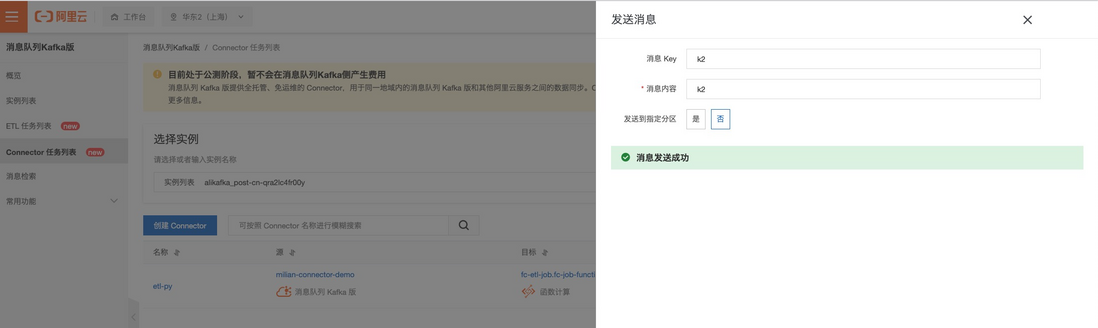

- Go to the Connector task list tab of the Kafka console and click Test; fill in the message content and click Send;

- After sending several messages, go to the Function Compute console, where several messages are shown as executing. Here we stop one task to simulate a task execution failure:

- In the Message Service (MNS) console, each of the two queues created earlier now contains one available message, representing one successful execution and one failed task:

In the queue details, we can see the contents of the two messages. Take the successful message as an example:

{
  "timestamp": 1646826806389,
  "requestContext": {
    "requestId": "919889e7-60ff-408f-a0c7-627bbff88456",
    "functionArn": "acs:fc:::services/fc-etl-job.LATEST/functions/fc-job-function",
    "condition": "",
    "approximateInvokeCount": 1
  },
  "requestPayload": "[{\"key\":\"k1\",\"offset\":1,\"overflowFlag\":false,\"partition\":5,\"timestamp\":1646826803356,\"topic\":\"connector-demo\",\"value\":\"k1\",\"valueSize\":4}]",
  "responseContext": {
    "statusCode": 200,
    "functionError": ""
  },
  "responsePayload": "[\n {\n \"key\": \"k1\",\n \"offset\": 1,\n \"overflowFlag\": false,\n \"partition\": 5,\n \"timestamp\": 1646826803356,\n \"topic\": \"connector-demo\",\n \"value\": \"k1\",\n \"valueSize\": 4\n }\n]"
}

In this message, the "responsePayload" key holds the raw content returned by the function. The result of data processing is normally returned as the response, so later stages can obtain the processed result by reading "responsePayload".

The "requestPayload" key holds the original content with which Kafka triggered Function Compute. Reading it gives you the original data.

Best Practices for Function Compute Asynchronous Tasks - Audio and Video Processing

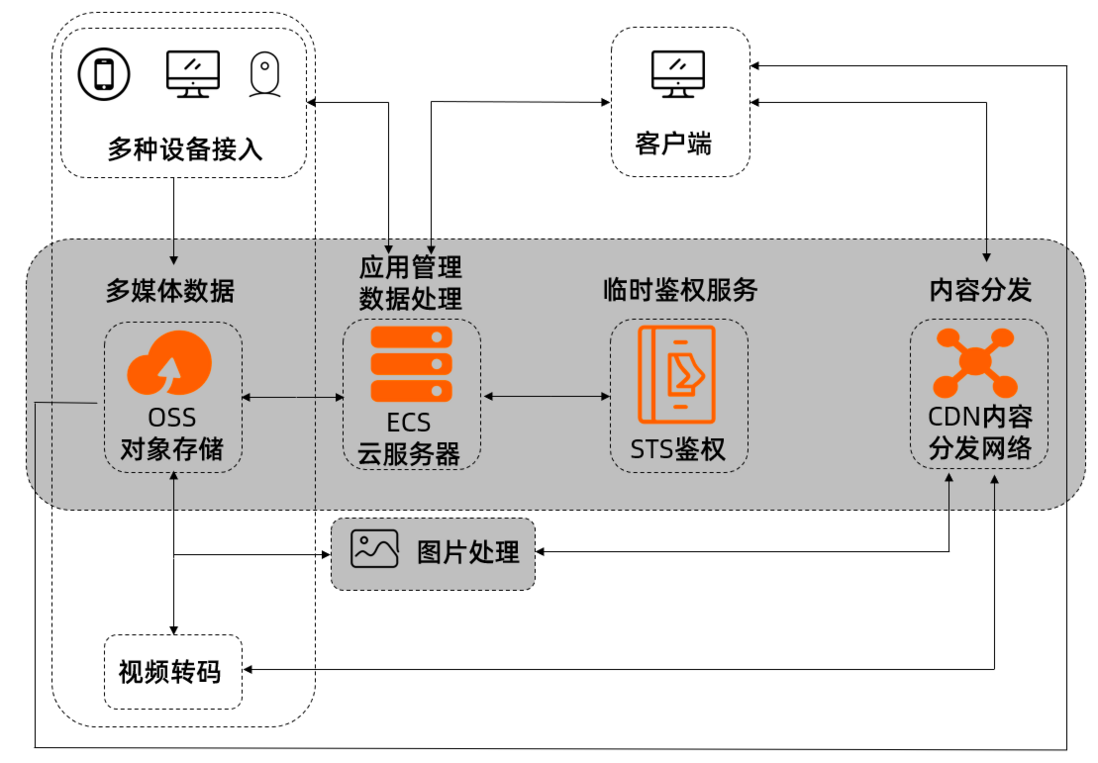

With the development of computer and network technology, video on demand is favored by education, entertainment, and other industries for its good human-computer interaction and streaming media transmission. The product lines of cloud computing vendors also keep maturing. If you want to build a video-on-demand application, going straight to the cloud removes obstacles such as hardware procurement and technical barriers. Taking Alibaba Cloud as an example, a typical solution is as follows:

In this solution, Object Storage Service (OSS) supports massive video storage. Collected and uploaded videos are transcoded to fit various terminals, and CDN accelerates video playback on terminal devices. There are also content security review requirements, such as detecting pornographic or terrorism-related content.

Audio and video processing is a typical long-running scenario and is well suited to Function Compute tasks.

Audio and video processing needs

In a VOD solution, video transcoding is the subsystem that consumes the most computing power. Although specialized transcoding services are available on the cloud, in some scenarios you may still choose to build your own transcoding service, for example:

- You need a more flexible video processing service. For example, a video processing service based on FFmpeg is already deployed on virtual machines or a container platform, and you want to improve its resource utilization and achieve fast elasticity and stability under pronounced peaks and valleys and sudden traffic surges;

- You need to process multiple very large videos quickly in batches. For example, hundreds of large videos at 1080P or above and over 4 GB each are generated every Friday, and each task may run for several hours;

- You want to track the progress of video processing tasks in real time; in some cases you need to log in to the instance to troubleshoot, or even stop a running task to avoid wasting resources.

Serverless Task support for audio and video scenarios

These are typical task scenarios. Because such workloads have pronounced peaks and valleys, operating the computing resources and keeping their cost down can take even more effort than the video processing business itself. Serverless Task is a product form created for exactly this kind of scenario. With Serverless Task, you can quickly build a video processing platform that is highly elastic, highly available, and inexpensive to operate.

In this scenario, the main Serverless Task capabilities we use are as follows:

- O&M-free and low cost: computing resources are used on demand, and nothing is charged when they are not in use;

- Friendly to long-running workloads: a single instance supports an execution time of up to 24 hours;

- Task deduplication: error compensation is supported on the trigger side. For a single task, Serverless Task deduplicates automatically, which makes execution more reliable;

- Task observability: all running, succeeded, and failed tasks can be traced and queried; historical task execution data and task logs can be queried;

- Task operability: you can stop and retry tasks;

- Agile development and testing: the Serverless Devs tool (s) is officially supported for automated one-click deployment; you can log in to a running function instance and debug third-party programs such as FFmpeg directly, so what you see is what you get.

Serverless - FFmpeg video transcoding

Project source code: https://github.com/devsapp/start-ffmpeg/tree/master/transcode/src
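At the core of such a function is the step that turns a task description into an FFmpeg command line. The sketch below is not the code from the linked project; it assumes the input video has already been downloaded to a local path and that FFmpeg is available on the `PATH`. The helper names are hypothetical, and downloading from and uploading to OSS are left out.

```python
import os
import subprocess


def build_ffmpeg_command(input_path: str, output_dir: str, dst_format: str) -> list:
    """Build the FFmpeg argument list for converting one file."""
    base = os.path.splitext(os.path.basename(input_path))[0]
    output_path = os.path.join(output_dir, f"{base}.{dst_format}")
    # -y overwrites an existing output file; FFmpeg picks the output
    # container from the file extension.
    return ["ffmpeg", "-y", "-i", input_path, output_path]


def transcode(input_path: str, output_dir: str, dst_format: str) -> str:
    """Run FFmpeg and return the path of the transcoded file."""
    os.makedirs(output_dir, exist_ok=True)
    cmd = build_ffmpeg_command(input_path, output_dir, dst_format)
    # check=True raises CalledProcessError on a non-zero exit code, which
    # lets the surrounding task be reported as failed.
    subprocess.run(cmd, check=True)
    return cmd[-1]
```

Passing the arguments as a list instead of a shell string avoids quoting problems with file names that contain spaces.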

Deploy

Download and install Serverless Devs:

npm install @serverless-devs/s

For detailed documentation, please refer to the Serverless Devs installation documentation.

Configure key information:

s config add

For detailed documentation, please refer to the Alibaba Cloud key configuration documentation.

- Initialize the project:

s init video-transcode -d video-transcode

- Enter the project and deploy:

cd video-transcode && s deploy

Call functions

- Initiate 5 asynchronous task invocations:

$ s VideoTranscoder invoke -e '{"bucket":"my-bucket", "object":"480P.mp4", "output_dir":"a", "dst_format":"mov"}' --invocation-type async --stateful-async-invocation-id my1-480P-mp4

VideoTranscoder/transcode async invoke success.

request id: bf7d7745-886b-42fc-af21-ba87d98e1b1c

$ s VideoTranscoder invoke -e '{"bucket":"my-bucket", "object":"480P.mp4", "output_dir":"a", "dst_format":"mov"}' --invocation-type async --stateful-async-invocation-id my2-480P-mp4

VideoTranscoder/transcode async invoke success.

request id: edb06071-ca26-4580-b0af-3959344cf5c3

$ s VideoTranscoder invoke -e '{"bucket":"my-bucket", "object":"480P.mp4", "output_dir":"a", "dst_format":"flv"}' --invocation-type async --stateful-async-invocation-id my3-480P-mp4

VideoTranscoder/transcode async invoke success.

request id: 41101e41-3c0a-497a-b63c-35d510aef6fb

$ s VideoTranscoder invoke -e '{"bucket":"my-bucket", "object":"480P.mp4", "output_dir":"a", "dst_format":"avi"}' --invocation-type async --stateful-async-invocation-id my4-480P-mp4

VideoTranscoder/transcode async invoke success.

request id: ff48cc04-c61b-4cd3-ae1b-1aaaa1f6c2b2

$ s VideoTranscoder invoke -e '{"bucket":"my-bucket", "object":"480P.mp4", "output_dir":"a", "dst_format":"m3u8"}' --invocation-type async --stateful-async-invocation-id my5-480P-mp4

VideoTranscoder/transcode async invoke success.

request id: d4b02745-420c-4c9e-bc05-75cbdd2d010f

- Log in to the FC console

You can clearly see the execution of each transcoding task:

- When each video started transcoding and when it finished;

- If a transcoding task is not going as expected, you can click to stop the invocation midway;

- By filtering on invocation status and time window, you can see how many tasks are currently running and how past tasks completed;

- You can trace the execution log and trigger payload of each transcoding task;

- When your transcoding function raises an exception, the dest-fail function is triggered. You can add custom logic, such as an alert, to this function.

After transcoding, you can also log in to the OSS console and open the specified output directory to view the transcoded videos.

When working with the project locally, you can do more than deploy: you can also view logs, view metrics, and debug in several modes. For details, refer to the Function Compute component command documentation.
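The five invocations shown earlier differ only in the target format and the invocation ID, so for larger batches the commands can be generated rather than typed by hand. The sketch below only builds the command strings, using the flags that appear in the invocations above; the `build_invoke_command` helper is hypothetical, and the ID scheme simply mirrors the `myN-480P-mp4` pattern used in this article.

```python
import json
import shlex


def build_invoke_command(index: int, obj: str, dst_format: str,
                         bucket: str = "my-bucket", output_dir: str = "a") -> str:
    """Build one asynchronous `s ... invoke` command line."""
    event = json.dumps({
        "bucket": bucket,
        "object": obj,
        "output_dir": output_dir,
        "dst_format": dst_format,
    })
    # One distinct ID per task, e.g. "my1-480P-mp4".
    invocation_id = f"my{index}-{obj.replace('.', '-')}"
    return " ".join([
        "s", "VideoTranscoder", "invoke",
        "-e", shlex.quote(event),
        "--invocation-type", "async",
        "--stateful-async-invocation-id", invocation_id,
    ])


formats = ["mov", "mov", "flv", "avi", "m3u8"]
commands = [
    build_invoke_command(i, "480P.mp4", fmt)
    for i, fmt in enumerate(formats, start=1)
]
for command in commands:
    print(command)
```

Giving every task its own invocation ID is what makes it possible to look up, stop, or retry that specific task later.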

