Disclaimer

All content in this article is for learning and exchange only. Captured content, sensitive URLs, and data interfaces have been desensitized. Using them for commercial or illegal purposes is strictly forbidden, and the author bears no responsibility for any resulting consequences. In case of infringement, please contact me and it will be deleted immediately!

Reverse-engineering target

- Target: a government services portal → Government-public interaction → I want to consult

- Homepage (Base64-encoded): aHR0cDovL3p3Zncuc2FuLWhlLmdvdi5jbi9pY2l0eS9pY2l0eS9ndWVzdGJvb2svaW50ZXJhY3Q=

- Interface (Base64-encoded): aHR0cDovL3p3Zncuc2FuLWhlLmdvdi5jbi9pY2l0eS9hcGktdjIvYXBwLmljaXR5Lmd1ZXN0Ym9vay5Xcml0ZUNtZC9nZXRMaXN0

Parameters to reverse:

- Request Headers: `Cookie: ICITYSession=fe7c34e21abd46f58555124c64713513`

- Query String Parameters: `s=eb84531626075111111&t=4071_e18666_1626075203000`

- Request Payload: `{"start":0,"limit":7,"TYPE@=":"2","OPEN@=":"1"}`

Reverse-engineering process

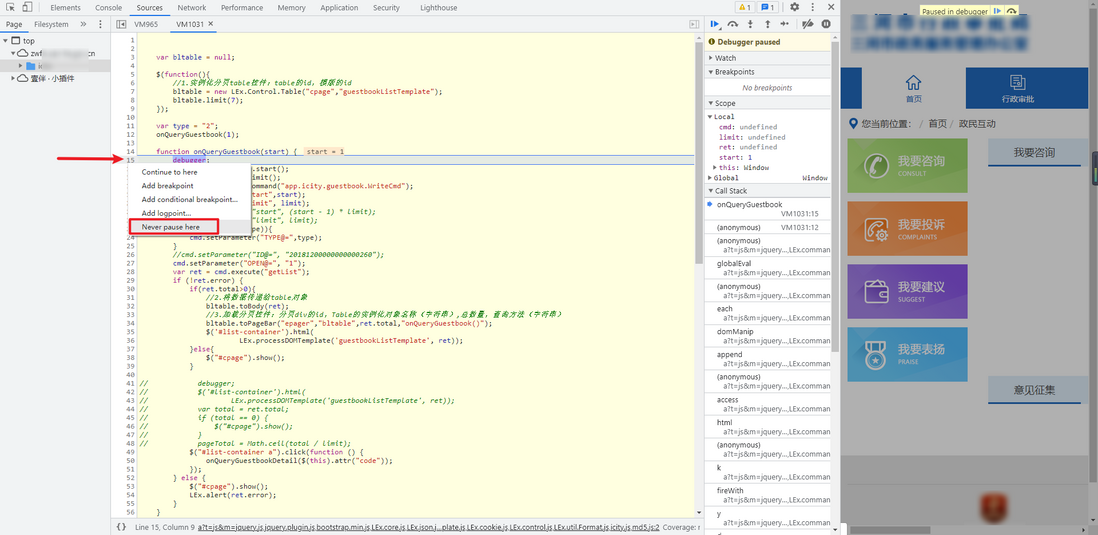

Bypassing the infinite debugger

Let's try to capture the traffic: open DevTools and refresh the page, and execution pauses at a `debugger` statement. Click resume and it pauses at yet another `debugger`. This is an infinite debugger, whose purpose is to stop people from debugging the page. In practice it is easy to bypass, and there are many ways to do so. Several commonly used methods are described below.

1. Never pause here

At the `debugger` line, right-click the line number and select Never pause here; DevTools will no longer stop at that line:

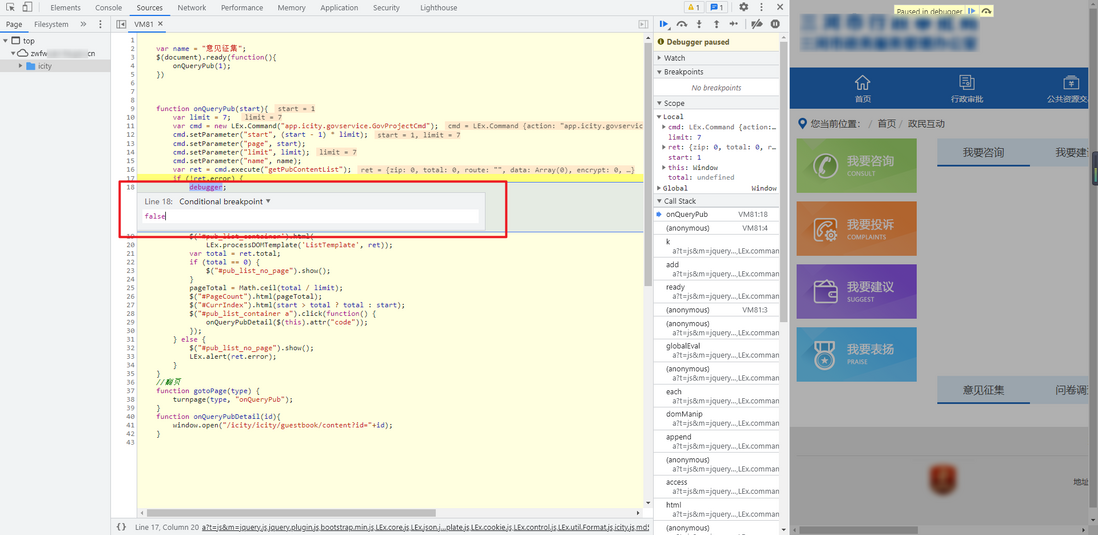

2. Add a conditional breakpoint

Similarly, right-click the line number, select Add conditional breakpoint, and enter `false` to skip the infinite debugger. Whatever the preceding code does, execution always pauses once it reaches the `debugger` statement. A conditional breakpoint whose condition is `false` tells DevTools never to pause at that line, so the `debugger` no longer takes effect.

3. Man-in-the-middle interception and replacement of the infinite-debugger function

Man-in-the-middle interception and replacement means swapping out the original function that contains the infinite debugger for a different one. This method works when you know which JS file contains the infinite-debugger function: rewrite that file so it no longer contains the function, then use a third-party tool to serve the rewritten file in place of the original. Many tools can do this, for example the browser extension ReRes, which maps requests to other URLs or to local files or directories according to rules you specify, or the AutoResponder feature of the packet-capture tool Fiddler.

4. Replace the function with an empty one

You can also defeat the infinite debugger by redefining the infinite-debugger function as an empty function directly in the Console. The drawback is that the override is lost as soon as the page is refreshed, so this method is rarely used.

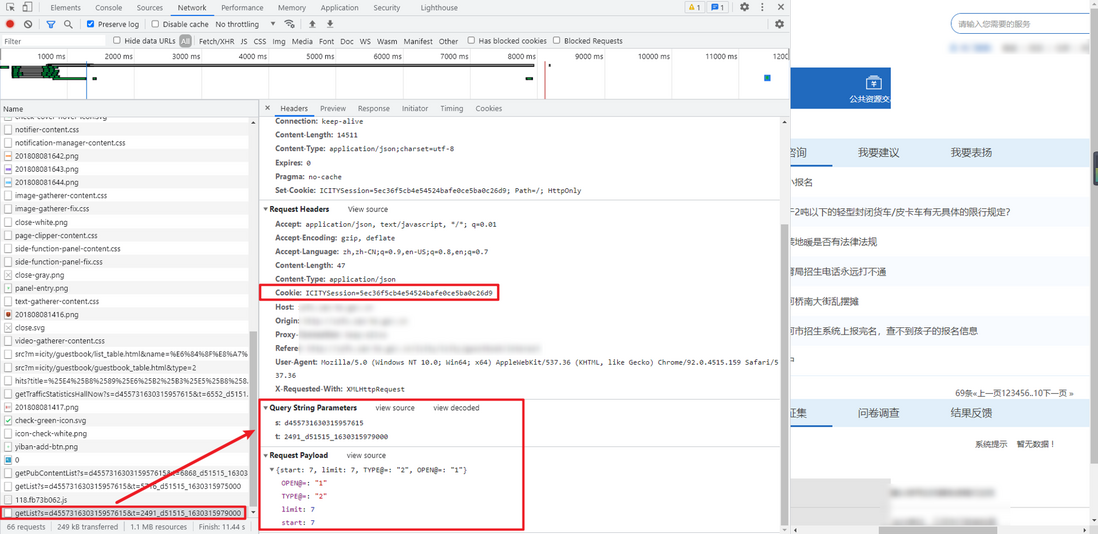
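Method 3 can also be automated with a scriptable proxy. The sketch below swaps in mitmproxy, which the article does not use, and takes a cruder approach than replacing the whole function: it deletes bare `debugger` keywords from JavaScript responses. The regex is naive (it also edits matching text inside string literals and misses obfuscated forms such as `"de" + "bugger"`), so treat it as a starting point rather than a reliable bypass.

```python
import re

# Naive pattern: the keyword `debugger` as a whole word, plus an optional semicolon.
DEBUGGER_RE = re.compile(r"\bdebugger\b\s*;?")


def strip_debugger(js_source: str) -> str:
    """Remove bare `debugger` statements from a JavaScript source string."""
    return DEBUGGER_RE.sub("", js_source)


class StripDebugger:
    """mitmproxy addon sketch; only the `response` event hook is used.

    `flow` is mitmproxy's HTTPFlow object.
    """

    def response(self, flow):
        content_type = flow.response.headers.get("content-type", "")
        path = flow.request.path.split("?")[0]
        # Only rewrite JavaScript responses (matched by extension or Content-Type).
        if path.endswith(".js") or "javascript" in content_type:
            flow.response.text = strip_debugger(flow.response.text)


# mitmproxy loads addon instances from this module-level list.
addons = [StripDebugger()]
```

Save it under any name (for example the hypothetical `strip_debugger.py`), run `mitmdump -s strip_debugger.py`, and point the browser at the proxy; intercepting HTTPS additionally requires installing mitmproxy's CA certificate.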

Packet capture analysis

After bypassing the infinite debugger, click through to the next page and capture the request. The data interface looks like http://zwfw.xxxxxx.gov.cn/icity/api-v2/app.icity.guestbook.WriteCmd/getList?s=d455731630315957615&t=2491_d51515_1630315979000. We need to work out the Cookie, the Query String Parameters, and the Request Payload.

Reversing the parameters

First, the Cookie. A direct search shows that its value is set by the Set-Cookie header in the response to the homepage request, so sending a GET request to the homepage is enough to obtain the cookie from the response.
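`requests` already parses Set-Cookie into `response.cookies`, so this step is only needed if you handle raw headers yourself. A minimal sketch with the standard library's `http.cookies.SimpleCookie`; the header string is a hypothetical example in which only the name/value pair comes from the captured request, and the `Path=/` attribute is an assumption.

```python
from http.cookies import SimpleCookie


def extract_icity_session(set_cookie_header: str) -> str:
    """Return the ICITYSession value from a raw Set-Cookie header string."""
    jar = SimpleCookie()
    jar.load(set_cookie_header)  # parses "name=value; attribute=..." pairs
    return jar["ICITYSession"].value


# Hypothetical header: only the name/value pair comes from the captured request.
header = "ICITYSession=fe7c34e21abd46f58555124c64713513; Path=/"
print(extract_icity_session(header))  # fe7c34e21abd46f58555124c64713513
```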

Looking at the Request Payload, start increases by 7 on each page while the other parameters stay the same.
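The paging rule can be expressed as a small helper. This is a sketch that assumes zero-based page numbers and that the step of 7 always equals `limit`, as in the captured payloads; `build_payload` is a hypothetical name.

```python
PAGE_SIZE = 7  # observed `limit`; `start` advances by the same amount per page


def build_payload(page: int) -> dict:
    """Request Payload for a zero-based page index (hypothetical helper)."""
    return {"start": page * PAGE_SIZE, "limit": PAGE_SIZE, "TYPE@=": "2", "OPEN@=": "1"}


for page in range(3):
    print(build_payload(page)["start"])  # 0, then 7, then 14
```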

The two query string parameters, s and t, are generated by JS encryption.

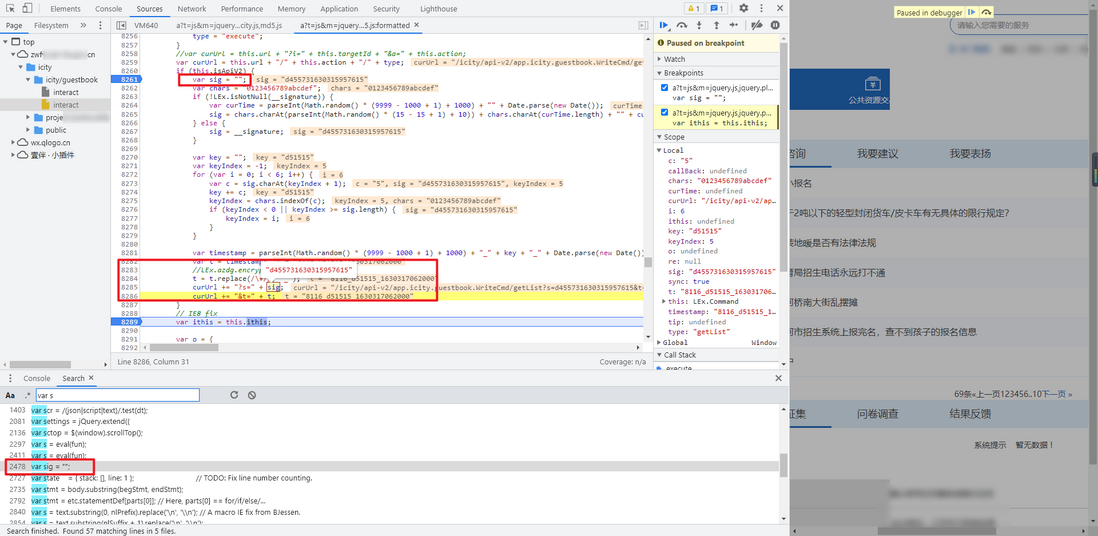

Search globally for the s parameter. A bare s has far too many matches, so try searching for `var s` instead; this turns up `var sig`. A little further on there are two telling statements, `curUrl += "?s=" + sig;` and `curUrl += "&t=" + t;`, which clearly splice the request URL, so the s parameter is `sig`. Set a breakpoint there and you can confirm it is the parameter we are looking for.
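For reference, the same splicing in Python. This is a sketch: `splice_url` and the base URL are placeholders, and `urlencode` percent-escapes special characters where the JS concatenation does not; for values made only of hex digits, digits, and `_`, as here, the result is identical.

```python
from urllib.parse import urlencode


def splice_url(cur_url: str, sig: str, t: str) -> str:
    """Python equivalent of: curUrl += "?s=" + sig; curUrl += "&t=" + t;"""
    return cur_url + "?" + urlencode({"s": sig, "t": t})


print(splice_url("http://example.com/getList", "eb84531626075111111", "4071_e18666_1626075203000"))
# http://example.com/getList?s=eb84531626075111111&t=4071_e18666_1626075203000
```

Passing the two values through the `params` argument of `requests` produces the same query string.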

When debugging locally, you will find that `LEx` (from `LEx.isNotNull`) is not defined. Just step into that function and copy the original:

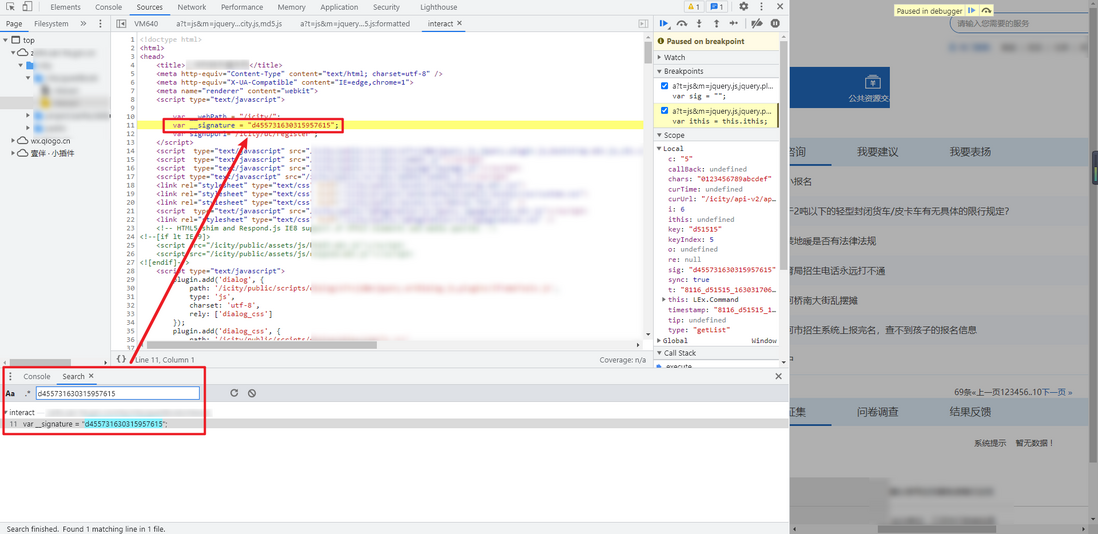

Debug again and you will get an error that `__signature` is not defined. A global search shows that this value appears in the homepage HTML, so it can be extracted directly with a regular expression.
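A minimal extraction sketch. The exact markup around `__signature` in the homepage is not shown in this article, so the sample HTML below is hypothetical; the pattern matches `signature = "..."` with or without the `__` prefix and uses `[^"]*` so it stops at the first closing quote.

```python
import re

# Assumed form of the inline script; the real page markup may differ.
SIGNATURE_RE = re.compile(r'signature\s*=\s*"([^"]*)"')


def extract_signature(html: str):
    """Return the first signature value found in the page, or None."""
    match = SIGNATURE_RE.search(html)
    return match.group(1) if match else None


sample_html = '<script>var __signature = "c988121626057020055"; var x = "y";</script>'
print(extract_signature(sample_html))  # c988121626057020055
```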

Complete code

Follow K Brother Crawler on GitHub, where crawler-related code continues to be shared. Stars are welcome! https://github.com/kgepachong/

The following shows only part of the key code and cannot be run directly! Full code repository: https://github.com/kgepachong/crawler/

JS encryption code

```javascript
// Local copy of the site's LEx.isNotNull
function isNotNull(obj) {
    if (obj === undefined || obj === null || obj == "null" || obj === "" || obj == "undefined")
        return false;
    return true;
}

function getDecryptedParameters(__signature) {
    var sig = "";
    var chars = "0123456789abcdef";
    if (!isNotNull(__signature)) {
        // Fallback: build a local signature when none is supplied
        var curTime = parseInt(Math.random() * (9999 - 1000 + 1) + 1000) + "" + Date.parse(new Date());
        sig = chars.charAt(parseInt(Math.random() * (15 - 15 + 1) + 10)) + chars.charAt(curTime.length) + "" + curTime;
    } else {
        // Normal path: use the __signature extracted from the homepage HTML
        sig = __signature;
    }
    // Derive a 6-character key by hopping through sig: the position of each
    // character in `chars` decides which character of sig is read next
    var key = "";
    var keyIndex = -1;
    for (var i = 0; i < 6; i++) {
        var c = sig.charAt(keyIndex + 1);
        key += c;
        keyIndex = chars.indexOf(c);
        if (keyIndex < 0 || keyIndex >= sig.length) {
            keyIndex = i;
        }
    }
    // t = <random 4-digit number>_<key>_<timestamp in ms, truncated to the second>
    var timestamp = parseInt(Math.random() * (9999 - 1000 + 1) + 1000) + "_" + key + "_" + Date.parse(new Date());
    var t = timestamp;
    //LEx.azdg.encrypt(timestamp,key);
    t = t.replace(/\+/g, "_");
    return {"s": sig, "t": t};
}

// Test example
// console.log(getDecryptedParameters("c988121626057020055"))
```

Python code

```python
#!/usr/bin/env python3
# -*- coding: utf-8 -*-

import re

import execjs
import requests

index_url = 'Desensitized; for the full code, see GitHub: https://github.com/kgepachong/crawler'
data_url = 'Desensitized; for the full code, see GitHub: https://github.com/kgepachong/crawler'
headers = {'User-Agent': 'Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/91.0.4472.124 Safari/537.36'}
session = requests.Session()


def get_encrypted_parameters(signature):
    # Run getDecryptedParameters from encrypt.js (the JS code above) via execjs
    with open('encrypt.js', 'r', encoding='utf-8') as f:
        js = f.read()
    encrypted_parameters = execjs.compile(js).call('getDecryptedParameters', signature)
    return encrypted_parameters


def get_signature_and_cookies():
    # The homepage sets ICITYSession via Set-Cookie and embeds __signature in its HTML
    response = session.get(url=index_url, headers=headers)
    cookies = response.cookies.get_dict()
    cookie = cookies['ICITYSession']
    # Non-greedy match so the capture stops at the first closing quote
    signature = re.findall(r'signature = "(.*?)"', response.text)[0]
    return cookie, signature


def get_data(cookie, parameters, page):
    # start advances by 7 per page; the other payload fields are fixed
    payload_data = {'start': page * 7, 'limit': 7, 'TYPE@=': '2', 'OPEN@=': '1'}
    params = {'s': parameters['s'], 't': parameters['t']}
    cookies = {'ICITYSession': cookie}
    response = session.post(url=data_url, headers=headers, json=payload_data, params=params, cookies=cookies).json()
    print(payload_data, response)


def main():
    ck, sig = get_signature_and_cookies()
    for page in range(10):
        # Crawl 10 pages of data
        param = get_encrypted_parameters(sig)
        get_data(ck, param, page)


if __name__ == '__main__':
    main()
```
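The execjs step, and the JavaScript runtime it requires, could be dropped by porting the JS to Python. The sketch below is a hand port, not code from the author's repository. It reproduces the key in both captured samples in this article (sig `eb84531626075111111` gives key `e18666`, sig `d455731630315957615` gives key `d51515`), but it skips the local fallback for a missing signature and the no-op `+` replacement.

```python
import random
import time

CHARS = "0123456789abcdef"


def _char_at(s: str, i: int) -> str:
    """Mimic JS String.prototype.charAt: '' when the index is out of range."""
    return s[i] if 0 <= i < len(s) else ""


def derive_key(sig: str) -> str:
    """Port of the 6-character key loop in getDecryptedParameters."""
    key, key_index = "", -1
    for i in range(6):
        c = _char_at(sig, key_index + 1)
        key += c
        key_index = CHARS.find(c)  # like JS indexOf (both return 0 for '')
        if key_index < 0 or key_index >= len(sig):
            key_index = i
    return key


def get_decrypted_parameters(signature: str) -> dict:
    """Assumes a non-empty signature taken from the homepage HTML."""
    # Date.parse(new Date()) drops milliseconds, hence whole seconds * 1000.
    ms = int(time.time()) * 1000
    t = f"{random.randint(1000, 9999)}_{derive_key(signature)}_{ms}"
    return {"s": signature, "t": t}
```

To try it, call `get_decrypted_parameters(sig)` in `main()` in place of `get_encrypted_parameters(sig)`.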
