Overview

Distributed transactions address data consistency for updates that span databases and services. But what does consistency mean here? What are strong consistency and weak consistency, and is this the same notion as consistency in the CAP theorem? This article answers these questions in depth.

What does consistency mean

In database theory, transactions have the familiar ACID properties:

- Atomicity: All operations in a transaction either complete or have no effect at all; the transaction never ends in an intermediate state. If an error occurs during execution, the database is rolled back to the state before the transaction started, as if the transaction had never run.

- Consistency: The integrity of the database holds both before the transaction starts and after it ends. Integrity constraints, including foreign keys and application-defined constraints, are not violated.

- Isolation: The database allows multiple concurrent transactions to read and modify its data at the same time. Isolation prevents the data inconsistencies that interleaved execution of those transactions could otherwise cause.

- Durability: Once the transaction completes, its changes to the data are permanent and are not lost even if the system fails.

To explain the C (consistency), consider a concrete business example: a transfer in which A sends 30 yuan to B. With a local transaction, the total A+B that users see stays the same before, during, and after the transfer. The user therefore regards the data as consistent: it satisfies the business constraint.

As the business grows more complex and introduces multiple databases and many microservices, it still cares a great deal about the consistency that local transactions provided. When a single business update spans databases or services, that consistency problem becomes a consistency problem of distributed transactions.

In a single-node local transaction, a query for the total A+B returns the same value at any time (at the common Read Committed or Repeatable Read isolation levels). The business constraint holds at all times, and we call this strong consistency.
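The transfer example can be sketched with a local transaction in SQLite. This is only an illustration under stated assumptions: the `account` table, the starting balances (100 and 50), and the `transfer` and `total` helpers are hypothetical and do not come from any particular system.

```python
import sqlite3

def total(conn):
    """Return the combined balance of all accounts (A+B)."""
    return conn.execute("SELECT SUM(balance) FROM account").fetchone()[0]

def transfer(conn, src, dst, amount):
    """Move `amount` from src to dst inside one local transaction."""
    with conn:  # commits on success, rolls back if an exception escapes
        conn.execute(
            "UPDATE account SET balance = balance + ? WHERE name = ?",
            (amount, dst),
        )
        conn.execute(
            "UPDATE account SET balance = balance - ? WHERE name = ?",
            (amount, src),
        )

conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE account ("
    "name TEXT PRIMARY KEY, balance INTEGER CHECK (balance >= 0))"
)
conn.executemany("INSERT INTO account VALUES (?, ?)", [("A", 100), ("B", 50)])
conn.commit()

before = total(conn)
transfer(conn, "A", "B", 30)
after_ok = total(conn)

# This transfer credits B and then fails the CHECK constraint on A,
# so the whole transaction, including the credit to B, is rolled back.
try:
    transfer(conn, "A", "B", 1000)
except sqlite3.IntegrityError:
    pass
after_failed = total(conn)

print(before, after_ok, after_failed)
```

In this sketch the total is the same before the transfer, after a successful transfer, and after a failed one, which is the business constraint the user cares about.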

Unable to achieve strong consistency

So far, no cross-database or cross-service distributed transaction scheme in practical use has achieved strong consistency.

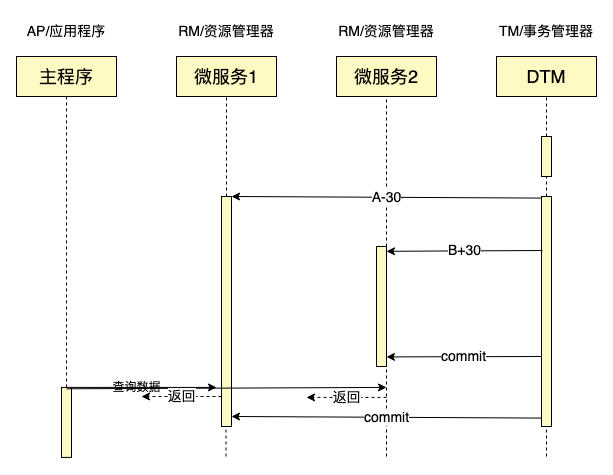

Consider whether XA transactions, which offer the highest level of consistency, are strongly consistent. We use a cross-bank transfer as the example, simulated here as a cross-database update of A and B. The following is a sequence diagram of an XA transaction:

If we issue a query at the point in time marked in the sequence diagram, the result is A+B+30, which is not equal to A+B and therefore does not satisfy strong consistency.
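The inconsistency window can be reproduced with a toy simulation. The `Branch` class below is a hypothetical stand-in for one database taking part in an XA transaction; it is not a real XA client, and it assumes that B's branch happens to commit before A's.

```python
class Branch:
    """A toy stand-in for one database taking part in an XA transaction."""

    def __init__(self, balance):
        self.balance = balance  # committed value, visible to readers
        self.pending = 0        # change staged by a prepared branch

    def prepare(self, delta):
        # Phase 1: the change is staged but not yet visible.
        self.pending = delta

    def commit(self):
        # Phase 2: the staged change becomes visible.
        self.balance += self.pending
        self.pending = 0

def visible_total(*branches):
    """What a plain query across all branches would see."""
    return sum(branch.balance for branch in branches)

a, b = Branch(100), Branch(50)
observed = [visible_total(a, b)]      # before the transaction

a.prepare(-30)
b.prepare(+30)
observed.append(visible_total(a, b))  # both prepared, nothing visible yet

b.commit()
observed.append(visible_total(a, b))  # B committed, A not yet: A+B+30

a.commit()
observed.append(visible_total(a, b))  # both committed

print(observed)
```

The third observation falls between the two commits and is the only one that differs from A+B, which is the short window in which an XA transaction is inconsistent.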

Theoretical strong consistency

Ordinary XA transactions are not strongly consistent. But if performance is ignored entirely, is strong consistency achievable in theory?

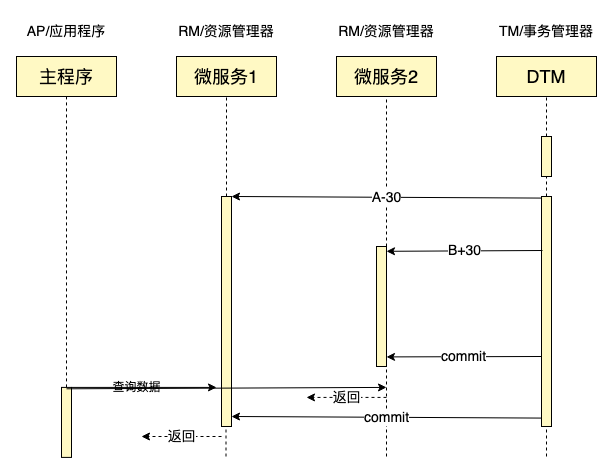

First, suppose we set the isolation level of every database involved in the XA transaction to Serializable. Does that give strong consistency? Consider the earlier timing scenario again:

In this case the query result equals A+B, but other scenarios still cause problems, as shown in the following figure:

With the timing shown in the figure, the query returns A+B-30, which is still inconsistent.

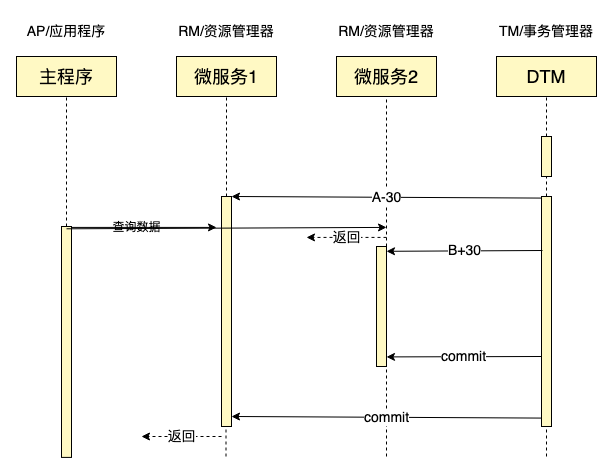

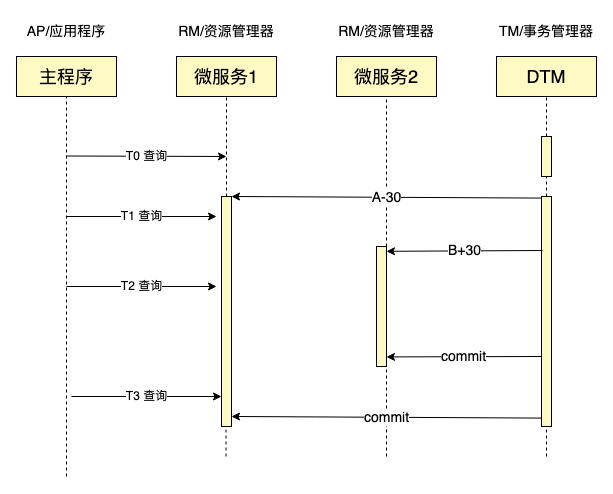

On closer analysis, there is a way to achieve strong consistency:

- Queries also run inside an XA transaction and read data with select for update. Only after all the data has been read does the query issue xa commit.

- To avoid deadlocks, the databases involved are given a fixed order, and every write and query accesses them in that same order.

Under this strategy, a query issued at any point in the sequence diagram returns A+B:

- A query at T0: the modification can only happen after the query completes, so the query returns A+B.

- A query at T1, T2, or T3: the query result is only returned after the modification has completed, so the result is also A+B.

Obviously, this theoretical strong consistency is extremely inefficient. All database transactions that touch overlapping data execute serially, and data must be queried and modified in a fixed order, so the cost is extremely high and the approach is almost impossible to use in production.
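The effect of this locking strategy can be sketched in memory. In this illustration an exclusive `threading.Lock` per database stands in for the row locks taken by select for update, and sorting by name stands in for the agreed database order; the `Db` class and the helper functions are hypothetical.

```python
import threading

class Db:
    """Toy database holding one balance, guarded by an exclusive lock."""

    def __init__(self, name, balance):
        self.name = name
        self.balance = balance
        self.lock = threading.Lock()

def run_locked(dbs, action):
    """Lock every database in name order, run action, then release all."""
    ordered = sorted(dbs, key=lambda db: db.name)
    for db in ordered:
        db.lock.acquire()
    try:
        return action()
    finally:
        for db in reversed(ordered):
            db.lock.release()

def transfer(src, dst, amount):
    def action():
        src.balance -= amount
        dst.balance += amount
    run_locked([src, dst], action)

def query_total(dbs):
    # Queries take the same locks as writers, in the same order.
    return run_locked(dbs, lambda: sum(db.balance for db in dbs))

a, b = Db("a", 100), Db("b", 50)
totals = []

def writer():
    for _ in range(200):
        transfer(a, b, 30)
        transfer(b, a, 30)

def reader():
    for _ in range(200):
        totals.append(query_total([b, a]))  # caller order does not matter

threads = [threading.Thread(target=writer), threading.Thread(target=reader)]
for t in threads:
    t.start()
for t in threads:
    t.join()

print(set(totals))
```

Every query sees the same total, but only because readers and writers are fully serialized, which is the efficiency cost described above.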

NewSQL's strong consistency

We have seen that distributed transactions across databases and across microservices cannot achieve strong consistency. There is, however, another kind of transaction: one inside a distributed database. Because such transactions span nodes, they are also called distributed transactions, and they can achieve strong consistency. They do so with MVCC. The principle is similar to that of a single-node database, but the implementation is much more complicated. For the detailed implementation, refer to Google's

Could cross-database and cross-microservice distributed transactions borrow NewSQL's approach to achieve strong consistency in the future? In theory, yes.

- Consistency for distributed transactions that span services but not databases is relatively simple to achieve. One way is to implement the TMRESUME option of XA transactions: because there is only one xa commit at the end, there is no inconsistent time window between two xa commits.

- Consistency for cross-database distributed transactions is much harder, because each database has its own internal versioning mechanism and getting them to cooperate is very difficult.

Classification of weak consistency

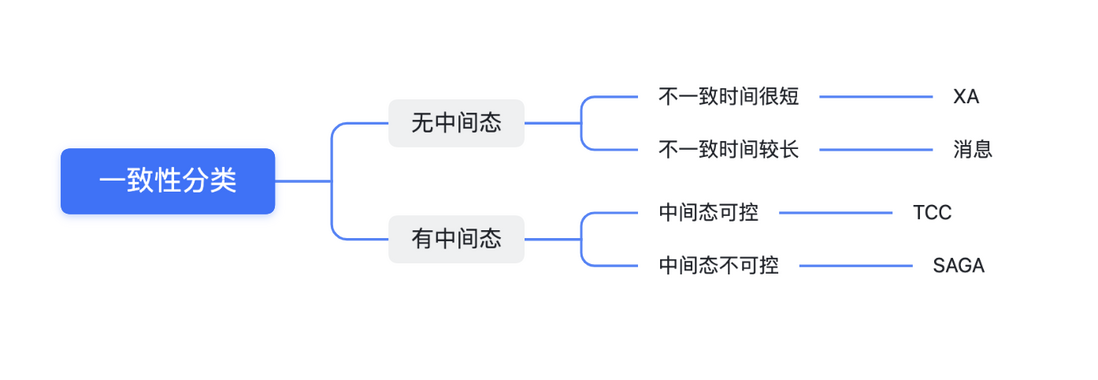

Since no existing distributed transaction scheme achieves strong consistency, are there differences among the weakly consistent ones? We classify their consistency as follows:

Ordered from strongest to weakest consistency:

XA transaction > message > TCC > SAGA

Here, message refers to the local-message-table style of distributed transaction. For an introduction to these four kinds of distributed transactions, see The seven most classic solutions for distributed transactions

They fall into two classes:

- No intermediate state: the data has only two states, the one before the transaction and the one after it, and no third state. XA and messages both belong to this class.

- Intermediate state: the data passes through an intermediate state. In TCC, for example, the data after Try differs from both the state before the transaction and the state after it. SAGA also has an intermediate state: if a forward operation changes the data to W and the SAGA transaction is then rolled back, W differs from the state both before and after the transaction.

- XA: Although XA is not strongly consistent, its consistency is the best among the distributed transaction schemes, because the inconsistent state is short-lived: the data is inconsistent only in the window when some branches have started committing but not all have finished. A database commit usually takes less than 10ms, so the inconsistency window is very short.

- Message: The data is inconsistent from the moment the first operation completes until all operations complete. This window is generally longer than XA's.

- TCC: The intermediate state of TCC is usually controllable and can be customized. Normally this part of the data is not shown to the user, so its consistency is better than SAGA's.

- SAGA: If a SAGA transaction rolls back, the user may already have seen the data modified by the forward operations of its sub-transactions, which can make for a poor user experience, so its consistency is the worst.

This classification is based only on the dimensions we care about. It fits most scenarios but not necessarily all of them. In practice, TCC can also turn out to be more consistent than messages. For example, if Try executes xa prepare, Confirm executes xa commit, and Cancel executes xa rollback, then the consistency of this TCC implementation is the same as XA's, and therefore higher than that of messages.
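The difference between the intermediate states of TCC and SAGA can be sketched from the point of view of the receiving account when the transaction ends up rolled back. This is a simplified illustration: the `Account` class and its `reserved` field are hypothetical, and a real TCC implementation defines its own Try, Confirm, and Cancel semantics.

```python
class Account:
    def __init__(self, balance):
        self.balance = balance  # the value shown to the user
        self.reserved = 0       # TCC-only staging area, hidden from the user

def tcc_rolled_back(account, amount):
    """Try then Cancel: return what the user sees at each step."""
    seen = [account.balance]
    account.reserved += amount  # Try: stage the incoming amount
    seen.append(account.balance)
    account.reserved -= amount  # Cancel: discard the staged amount
    seen.append(account.balance)
    return seen

def saga_rolled_back(account, amount):
    """Forward operation then compensation: return what the user sees."""
    seen = [account.balance]
    account.balance += amount   # forward operation changes visible data
    seen.append(account.balance)
    account.balance -= amount   # compensation undoes it afterwards
    seen.append(account.balance)
    return seen

tcc_view = tcc_rolled_back(Account(50), 30)
saga_view = saga_rolled_back(Account(50), 30)

print(tcc_view)
print(saga_view)
```

In this sketch the TCC user never sees the staged amount, while the SAGA user briefly sees a balance of 80 that belongs to neither the state before the transaction nor the state after it.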

Consistency in CAP theory

The consistency discussed so far is the database notion of consistency, which differs from consistency in CAP.

- Strong consistency in CAP concerns a read that immediately follows a write in a distributed system: if the read returns the latest version, as it would on a single node, the system is strongly consistent.

- Strong consistency in distributed transactions means that the data users read always satisfies the business constraints while a transaction is in progress. No solution currently used in practice achieves it.

These two notions of strong consistency differ in meaning, but from the user's perspective they have something in common: both ask whether the system behaves like a single-node system, so that users need not worry about the new problems that distribution brings.

Readers often have a further question: a distributed transaction system is itself a distributed system, so where does it stand on consistency in CAP?

Distributed consensus protocols such as Paxos and Raft now have mature industrial implementations. When a machine fails or the network partitions, a new leader can be elected and service restored within a few hundred milliseconds to a few seconds. In CAP terms, this means choosing CP while giving up A only for that brief failover window.

Data-sensitive applications such as NewSQL databases and distributed transactions therefore generally choose CP, sacrificing A for a few hundred milliseconds. In this sense, distributed transactions are strongly consistent in CAP terms. For example, our dtm distributed transaction framework stores global transaction progress in a CP database (most cloud vendors provide one).

Summary

This article has analyzed consistency in distributed transactions in detail. After establishing that no strongly consistent solution exists in practice, it classified the weakly consistent schemes and examined approaches that could in theory achieve strong consistency.

Much of this article is original thinking. If anything has not been fully thought through, readers are welcome to discuss it in the comment section.

- Our official account: distributed transactions

- Our project: https://github.com/yedf/dtm

- You are welcome to use dtm, or to learn and practice distributed transactions through dtm. A star to support us is also welcome.
