Source: https://learn.ibm.com/course/...

1. Introduction to Containers

1.1 What is a container?

A container is an executable unit of software in which application code is packaged, along with its libraries and dependencies, in common ways so that it can be run anywhere, whether on a desktop, on-premises, or in the cloud.

To do this, containers take advantage of a form of operating system virtualization in which features of the operating system are leveraged to both isolate processes and control the amount of CPU, memory, and disk storage that those processes have access to. In the case of the Linux kernel, namespaces and cgroups are the operating system primitives that enable this virtualization.

Containers first appeared decades ago, but the modern container era began in 2013 with the introduction of Docker.

1.2 Benefits of a container

- Lightweight - eliminating the need for a full operating system instance per application

- Portable - carry all their dependencies with them

- Architecture - ideal fit for modern development and application patterns that expect regular deployments of incremental changes

- Utilization - enable developers and operators to improve the CPU and memory utilization of physical machines

1.3 Virtual Machine vs Container

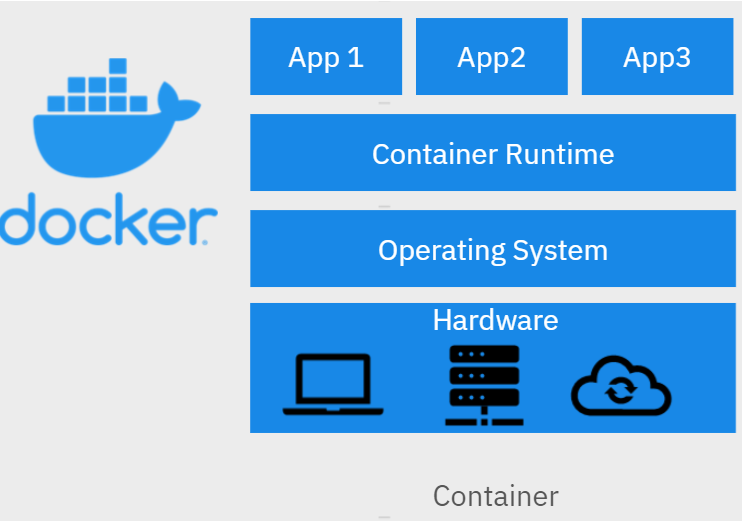

- Containers run on a container engine rather than directly on the operating system.

- Containers can start up much faster than virtual machines.

- There are three states a container can be in: waiting, running, and terminated.

1.4 Docker

Docker is an open-source software platform for building and running applications as containers.

A container runtime is software that executes containers. Docker is a container runtime.

Docker is likely the most popular and best-known container runtime, but Docker has also introduced the containerd runtime, which is gaining popularity. Other runtimes include CRI-O, the container engine included in OpenShift Container Platform (OCP), and rkt.

Docker Command Line Interface (CLI):

- BUILD: Create container images

- RUN: Test an image on local computer to ensure it is running correctly

- PUSH: Store images in a remote location

- PULL: Retrieve images that are stored in a remote location

- IMAGES: List all the images, their repositories, tags, and sizes

- TAG: Copy an existing image and give the copy a new name

1.5 Building Container Images

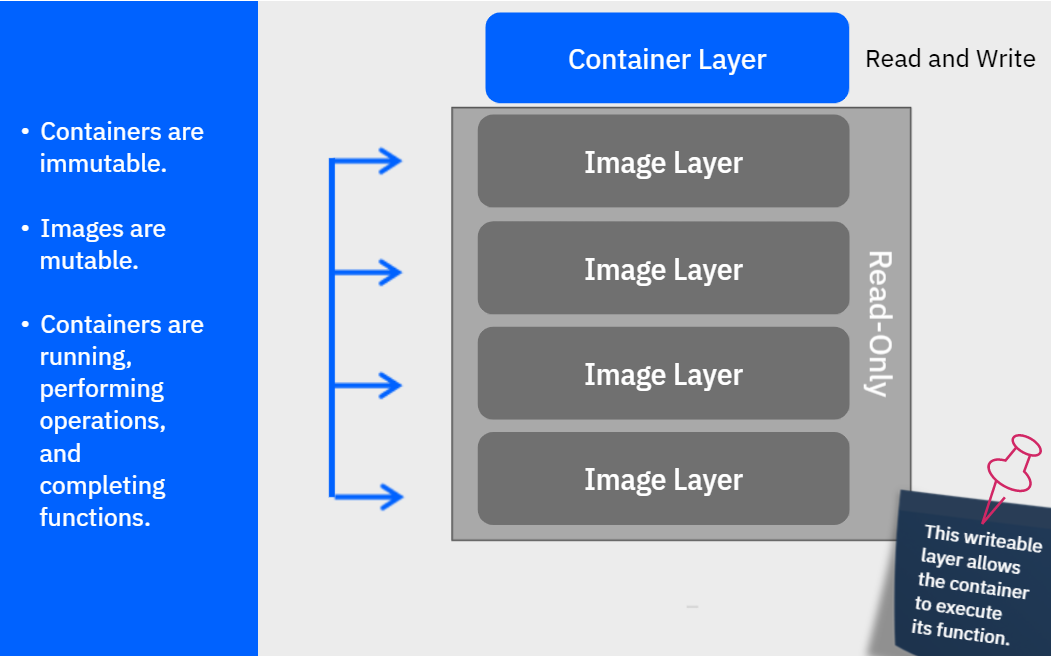

An image (also known as a container image) is a template for the content of a container.

Images are built layer by layer, with each Docker instruction creating a new layer on top of the existing layers.

Docker can automatically build container images by using the instructions from a Dockerfile.

A Dockerfile is just a text file that contains all the commands a user would call on the command line to create the image. Docker provides a set of instructions that are used to make this process a bit more straightforward.

Dockerfile Instructions:

- FROM: defines the base image that the new image builds on

- RUN: executes commands while the image is being built

- ENV: sets environment variables

- ADD and COPY: put your application code or executable into your image. COPY can only copy local files or directories, while ADD can also add files from remote URLs.

- CMD: provides a default command for executing a container

A path such as ./app refers to a directory named app in our working directory.

Docker LAB

1. Save the following as a file named "Dockerfile", with no file extension (saving it as a .yaml file did not work), in a working directory.

    FROM node:9.4.0-alpine
    COPY app.js .
    COPY package.json .
    RUN npm install && \
        apk update && \
        apk upgrade
    CMD node app.js

2. Run docker build to build the image (it is tagged my-app:v1 in the later steps). This does not work at first:

- You need to cd to the location of the Dockerfile.

- Even then it fails, because the JavaScript file and the JSON file are not in our working directory.

3. Remove the COPY lines from the file so that it reads as below:

    FROM node:9.4.0-alpine
    RUN npm install && \
        apk update && \
        apk upgrade
    CMD node app.js

Now the build works.

4. Check with the command "docker images"; my-app appears in the REPOSITORY column.

5. Try to run it with the command "docker run my-app:v1". This does not work, because we removed app.js from the image.

6. Delete the image.

1.6 Registry

A container registry is used for the storage and distribution of named container images.

Registries store named images, and the Docker build and tag commands can be used to name images.

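In general, there are three parts to an image name, written as hostname/repository:tag (for example, docker.io/ubuntu:18.04):

- Hostname: identifies the registry to which the image is pushed. Docker Hub = docker.io, IBM Cloud Container Registry = us.icr.io

- Repository: a group of related container images, usually different versions of the same application. A good name includes the name of the application or service. In docker.io/ubuntu:18.04, the repository is ubuntu.

- Tag: identifies a specific version of the image within the repository. In docker.io/ubuntu:18.04, the tag is 18.04.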
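To make the three parts concrete, here is a minimal Python sketch that splits a fully qualified image name. The function name parse_image_name and the ImageName type are made up for this note, and the sketch assumes all three parts are present; it is not how Docker itself parses names, which also handles defaults such as an omitted hostname or tag.

```python
from typing import NamedTuple


class ImageName(NamedTuple):
    hostname: str
    repository: str
    tag: str


def parse_image_name(name: str) -> ImageName:
    """Split a fully qualified 'hostname/repository:tag' image name."""
    # Assumption: the first path segment is the registry hostname.
    hostname, _, remainder = name.partition("/")
    # The tag follows the last colon; slashes inside the repository are kept.
    repository, _, tag = remainder.rpartition(":")
    if not (hostname and repository and tag):
        raise ValueError(f"expected hostname/repository:tag, got {name!r}")
    return ImageName(hostname, repository, tag)


print(parse_image_name("docker.io/ubuntu:18.04"))
# ImageName(hostname='docker.io', repository='ubuntu', tag='18.04')
```

A repository with a namespace, such as us.icr.io/my-namespace/my-app:v1 (a hypothetical name), keeps the namespace as part of the repository.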

Note: Kubernetes is an open-source container-orchestration system for automating application deployment, scaling, and management. It was originally designed by Google, and is now maintained by the Cloud Native Computing Foundation.

2. Introduction to Kubernetes

2.1 Container orchestration

- Aids in the provisioning and deployment of containers to make this a more automated, unified, and smooth process.

- Ensures that containers are redundant and available so that applications experience minimal downtime.

- Scales containers up and down to meet demand, and it load balances requests across instances so that no one instance is overwhelmed.

- Handles the scheduling of containers to underlying infrastructure.

- Performs health checks to ensure that applications are running, and takes necessary actions when checks fail.

2.2 What is Kubernetes

Kubernetes is a portable, extensible, open-source platform for managing containerized workloads and services that facilitates both declarative configuration and automation. It has a large, rapidly growing ecosystem. Kubernetes services, support, and tools are widely available.

Kubernetes is sometimes abbreviated as "k8s."

- Open source

- Container orchestration platform

- Facilitates declarative management

- Possesses a growing ecosystem

- Widely available

What Kubernetes is Not:

- Not a traditional, all-inclusive platform as a service (PaaS)

- Does not limit the types of applications

- Does not deploy source code or build applications

- Does not prescribe logging, monitoring, or alerting solutions

- Does not provide built-in middleware, databases, or other services

What does Kubernetes do:

- Aids in the provisioning and deployment of containers to make this a more automated, unified and smooth process

- Performs health checks to ensure that applications are running, and takes necessary actions when checks fail

- Ensures that containers are redundant and available so that applications experience minimal downtime

- Scales containers up and down to meet demand, and load balances requests across instances so that no one instance is overwhelmed

- Handles the scheduling of containers to underlying infrastructure

2.3 Architecture

A deployment of Kubernetes is called a cluster. On the left side of this diagram is the control plane, which makes decisions about the cluster and detects and responds to events in the cluster. The right side includes the worker nodes.

An example of a decision made by the control plane is scheduling of workloads, and an example of responding to an event is creating new resources when an application is deployed.

- Kubernetes API Server: Exposes the Kubernetes API. All communication in the cluster utilizes this API. For example, the Kubernetes API server accepts commands to view or change the state of the cluster.

- etcd: A highly available key-value store that contains all the cluster data. When you tell Kubernetes to deploy your application, that deployment configuration is stored in etcd. Etcd is thus the source of truth for the state in a Kubernetes cluster, and the system works to bring the cluster state into line with what is stored in etcd.

- Kubernetes Scheduler: Assigns newly created Pods to nodes. This basically means that the kube-scheduler determines where your workloads should run within the cluster.

- Kubernetes Controller Manager: Runs all the controller processes that monitor the cluster state and ensure that the actual state of a cluster matches the desired state. You will learn more about controllers shortly.

- Cloud Controller Manager: Runs controllers that interact with the underlying cloud providers. Since Kubernetes is open source and would ideally be adopted by a variety of cloud providers and organizations, Kubernetes strives to be as cloud agnostic as possible. The cloud-controller-manager allows both Kubernetes and the cloud providers to evolve freely without introducing dependencies on the other.

- Nodes: The worker machines in a Kubernetes cluster; in other words, user applications run on nodes. Nodes can be virtual or physical machines. Nodes are not created by Kubernetes itself, but rather by the cloud provider, which allows Kubernetes to run on a variety of infrastructures. Each node is then managed by the control plane, and the components on a node enable that node to run Pods.

- Pods: The simplest unit that you deploy in Kubernetes. A Pod represents a process running in your cluster; further, it represents a single instance of an application running in your cluster. Most often, a Pod wraps a single container.

- Kubelet: The most important component on a node. This controller communicates with the kube-apiserver to receive new and modified Pod specifications and ensures that those Pods and their associated containers are running as desired. The kubelet also reports to the control plane on health and status. In order to start a Pod, the kubelet uses the container runtime, another component on each node.

- Container runtime: Responsible for downloading images and running containers. Rather than providing a single container runtime, Kubernetes implements a Container Runtime Interface that permits pluggability of the container runtime.

- Kube-proxy: A network proxy that runs on each node in the cluster. It maintains network rules that allow communication to Pods running on nodes.

A Control loop is a non-terminating loop that regulates the state of a system.

Controllers in Kubernetes work the same way, but they watch the state of a Kubernetes cluster and take action to ensure that the cluster’s state matches the desired state.
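The idea of comparing desired state with actual state can be sketched in a few lines of Python. This is only an illustration under simple assumptions: the function name reconcile and the action strings are invented for this note, and a real controller watches the API server continuously rather than being called once.

```python
from typing import List


def reconcile(desired_replicas: int, running_pods: List[str]) -> List[str]:
    """One pass of a control loop: list the actions that move actual toward desired."""
    actions: List[str] = []
    difference = desired_replicas - len(running_pods)
    if difference > 0:
        # Too few pods: ask for the missing ones.
        actions.extend(["create pod"] * difference)
    elif difference < 0:
        # Too many pods: remove the surplus, taken from the end of the list.
        actions.extend(f"delete {name}" for name in running_pods[difference:])
    return actions


print(reconcile(3, ["pod-a"]))                    # ['create pod', 'create pod']
print(reconcile(1, ["pod-a", "pod-b", "pod-c"]))  # ['delete pod-b', 'delete pod-c']
print(reconcile(2, ["pod-a", "pod-b"]))           # []
```

When the actual state already matches the desired state, the pass returns no actions, which is the steady state a controller keeps returning to.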

2.4 Kubernetes Objects

Kubernetes objects are persistent entities in Kubernetes that define the state of your cluster.

To work with these objects (create, update or delete) you use the Kubernetes API. Probably the most common way to do this is to use the kubectl command-line interface (CLI).

Kubernetes objects consist of two main fields:

- Object spec: provided by the user, the desired state for this object.

- Status: provided by Kubernetes, the actual current state of the object.

Examples of Kubernetes objects:

- Namespaces: A convenient way to virtualize a physical cluster, making one cluster appear to be several distinct clusters. A logical separation of a cluster into virtual clusters. Suitable for multiple teams or projects. (A cluster automatically has several namespaces.)

- Names: Each object has a name, which must be unique for that resource type within that namespace.

- Labels: Key-value pairs that can be attached to objects as identification. They allow objects to be grouped and organized. (They do not uniquely identify a single object.)

- Selectors: The core grouping primitive; a selector identifies a set of objects.
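How labels and selectors work together can be sketched in Python. The function select and the sample pods below are hypothetical; the sketch only covers equality-based matching, where an object is selected when its labels contain every key and value in the selector.

```python
from typing import Dict, List


def select(objects: List[dict], selector: Dict[str, str]) -> List[str]:
    """Return the names of objects whose labels contain every selector pair."""
    return [
        obj["name"]
        for obj in objects
        if all(obj["labels"].get(key) == value for key, value in selector.items())
    ]


# Hypothetical objects; several of them share the same label values.
pods = [
    {"name": "web-1", "labels": {"app": "nginx", "env": "prod"}},
    {"name": "web-2", "labels": {"app": "nginx", "env": "dev"}},
    {"name": "db-1", "labels": {"app": "postgres", "env": "prod"}},
]

print(select(pods, {"app": "nginx"}))                 # ['web-1', 'web-2']
print(select(pods, {"app": "nginx", "env": "prod"}))  # ['web-1']
```

The first call shows why a label is not a unique identifier: two pods carry app=nginx, and the selector returns both.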

The Kubernetes Master is a collection of three processes that run on a single node in your cluster, which is designated as the master node. Those processes are:

- kube-apiserver: validates and configures data for the API objects, which include Pods, services, replication controllers, and others. The API server services REST operations and provides the frontend to the cluster’s shared state through which all other components interact.

- kube-controller-manager: is a daemon that embeds the core control loops shipped with Kubernetes. In applications of robotics and automation, a control loop is a non-terminating loop that regulates the state of the system. In Kubernetes, a controller is a control loop that watches the shared state of the cluster through the API server and makes changes attempting to move the current state towards the desired state.

- kube-scheduler: is a policy-rich, topology-aware, workload-specific function that significantly impacts availability, performance, and capacity. The scheduler needs to take into account individual and collective resource requirements, quality of service requirements, hardware/software/policy constraints, affinity and anti-affinity specifications, data locality, inter-workload interference, deadlines, and so on. Workload-specific requirements will be exposed through the API as necessary.

Basic Kubernetes objects:

- Pods: The simplest unit that you deploy in Kubernetes. A pod represents a process running in your cluster; further, it represents a single instance of an application running in your cluster. Most often, a pod wraps a single container, though in some cases a pod may encapsulate multiple tightly coupled containers that share resources. This is a more advanced use case; in general, consider a pod as a wrapper for a single container. When using the kubectl CLI to create objects, you often provide a YAML file that defines the object or objects that you want to create. The Kubernetes LAB section contains an example of a YAML file that defines a simple pod.

Pod states include Pending, Running, Succeeded, Failed, and Unknown.

Pod vs Container:

- ReplicaSets: A group of identical pods that are running. An application can be horizontally scaled by running replicas of a pod. ReplicaSets are the object used to do this.

- Deployments: An object that provides updates for both pods and ReplicaSets. Deployments run multiple replicas of an application by creating ReplicaSets and offering additional management capabilities on top of those ReplicaSets.

2.5 Kubectl CLI

Kubectl provides a myriad of functionality for working with Kubernetes clusters and managing the workloads that are running in a cluster.

There are many different types of commands available via kubectl, but let’s quickly discuss two key types of commands:

Imperative: Quickly create, update, or delete Kubernetes objects. Can be used during development, but not recommended for production systems (no audit trail, not flexible, limited options). For example, to create a pod that runs a specific image, nginx in this case, run:

    kubectl run nginx --image nginx

This is a simple way, as it only contains the pod name and the image that should be run as a container.

You can also write a YAML file and apply it imperatively using the create command:

    kubectl create -f nginx.yaml

Declarative: Desired state, shared configuration file. Declarative management is only available when you’re using YAML config files. There’s no such thing as a declarative command. When you’re using declarative operations, you don’t tell Kubernetes what to do by providing a verb (create/replace). Instead, you use the single "apply" command and trust Kubernetes to work out the actions to perform. For example, this applies all files under the nginx directory:

    kubectl apply -f nginx/

This applies file.yaml to deploy an application:

    kubectl apply -f file.yaml

2.6 Using Kubernetes

See Kubernetes Lab Section for more.

3. Managing Applications with Kubernetes

3.1 ReplicaSets

- Deployment: see Kubernetes LAB, step 14

- Enable Public Endpoint: see Kubernetes LAB, step 15

There are some limitations with a single pod version application. You can work around these limitations with the use of a ReplicaSet.

A ReplicaSet is automatically created for you when you create a deployment. By default, a ReplicaSet replicates to one single pod, but that can be changed.

ReplicaSet from Scratch without a deployment

Scale Existing Deployment

List all the pods and then delete one; the ReplicaSet creates a replacement to restore the desired number of pods.

3.2 Autoscaling

Horizontal Pod Autoscaler (HPA) enables applications to increase the number of pods based on traffic.

Autoscale a deployment or a ReplicaSet using kubectl as follows:

First, get your current state of pods. You have one pod in this scenario.

Since you created a deployment, you also get a ReplicaSet automatically created for you.

In order to autoscale, you can simply use the autoscale command with some attributes.

"--min" is the minimum number of pods.

"--max" is the maximum number of pods.

"--cpu-percent" is the trigger to create new pods. This tells the system, “If the average CPU usage across the pods hits 10%, create a new pod.” You are using a very small number here because you don’t really have a CPU-intensive application.
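How the pod count follows from the CPU target can be sketched in Python. The function below is a simplified version of the scaling rule described in the Kubernetes documentation (desired = ceil(current replicas × current metric / target metric)), clamped to the minimum and maximum. The function name and the sample numbers are assumptions for illustration, and details such as the tolerance band and stabilization windows are left out.

```python
import math


def desired_replicas(current_replicas: int,
                     current_cpu_percent: float,
                     target_cpu_percent: float,
                     min_pods: int,
                     max_pods: int) -> int:
    """Estimate the replica count an autoscaler would aim for."""
    # Scale the current count by how far utilization is from the target.
    raw = math.ceil(current_replicas * current_cpu_percent / target_cpu_percent)
    # Never go below the minimum or above the maximum.
    return max(min_pods, min(max_pods, raw))


print(desired_replicas(1, 35, 10, min_pods=1, max_pods=10))   # 4
print(desired_replicas(1, 200, 10, min_pods=1, max_pods=10))  # 10
print(desired_replicas(4, 2, 10, min_pods=2, max_pods=10))    # 2
```

With a 10% target, one pod running at 35% CPU leads to four pods; very high load is capped at the maximum, and low load never drops below the minimum.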

3.3 Basic Policies

- Resource quota: When several users or teams share a cluster with a fixed number of nodes, there is a concern that one team could use more than its fair share of resources. Resource quotas are a tool for administrators to address this concern. A resource quota, defined by a ResourceQuota object, provides constraints that limit aggregate resource consumption per namespace.

- LimitRange: a policy to constrain resource allocations (to pods or containers) in a namespace. Includes Compute Resources, Storage Request, Limit for a Resource in a Namespace, Request/Limit for Compute Resources.

Compute resource types:

CPU: basic unit = core

Memory: basic unit = byte

Resource requests and limits that each container in a pod can specify:

spec.containers[].resources.limits.cpu

spec.containers[].resources.limits.memory

spec.containers[].resources.requests.cpu

spec.containers[].resources.requests.memory

API resources: these requests and limits are read and modified through the Kubernetes API server.

Limiting Pod Compute Resources (Compute Resource Quota)

A pod resource request/limit for a particular resource type is the sum of the resource requests/limits of that type for each container in the pod.
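The summing rule can be checked with a short Python sketch. The function pod_total and the container values are made up for this note; CPU is expressed in millicores and memory in bytes so that plain integers can be added.

```python
from typing import Dict, List

MIB = 2 ** 20  # bytes in one mebibyte


def pod_total(containers: List[Dict[str, Dict[str, int]]], kind: str, resource: str) -> int:
    """Sum one resource ('cpu' or 'memory') over all containers in a pod.

    kind is 'requests' or 'limits'; missing entries count as zero.
    """
    return sum(c.get(kind, {}).get(resource, 0) for c in containers)


# Hypothetical pod with two containers (CPU in millicores, memory in bytes).
containers = [
    {"requests": {"cpu": 250, "memory": 64 * MIB}, "limits": {"cpu": 500, "memory": 128 * MIB}},
    {"requests": {"cpu": 250, "memory": 64 * MIB}, "limits": {"cpu": 500, "memory": 128 * MIB}},
]

print(pod_total(containers, "requests", "cpu"))            # 500
print(pod_total(containers, "limits", "memory") // MIB)    # 256
```

So this pod requests 0.5 CPU in total and has a total memory limit of 256 MiB.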

Resource Quota for Extended Resources

Take the GPU resource as an example: if the resource name is nvidia.com/gpu, and you want to limit the total number of GPUs requested in a namespace to 4, you can define a quota as follows:

requests.nvidia.com/gpu: 4

Limiting Storage Resources (Storage Resource Quota)

You can limit the total sum of storage resources that can be requested in a given namespace.

- requests.storage: Across all persistent volume claims, the sum of storage requests cannot exceed this value.

- persistentvolumeclaims: The total number of persistent volume claims that can exist in the namespace.

- <storage-class-name>.storageclass.storage.k8s.io/requests.storage: Across all persistent volume claims associated with the storage-class-name, the sum of storage requests cannot exceed this value.

- <storage-class-name>.storageclass.storage.k8s.io/persistentvolumeclaims: Across all persistent volume claims associated with the storage-class-name, the total number of persistent volume claims that can exist in the namespace.
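The two namespace-wide storage quotas above can be illustrated with a small Python check. This is a sketch under stated assumptions, not the quota controller's actual logic: the function name within_storage_quota is invented, sizes are given in GiB as plain integers, and the per-storage-class variants are not modeled.

```python
from typing import List


def within_storage_quota(existing_claims_gib: List[int],
                         new_claim_gib: int,
                         max_total_gib: int,
                         max_claims: int) -> bool:
    """Check a new claim against requests.storage and persistentvolumeclaims quotas."""
    total_after = sum(existing_claims_gib) + new_claim_gib  # sum of storage requests
    count_after = len(existing_claims_gib) + 1              # number of claims
    return total_after <= max_total_gib and count_after <= max_claims


print(within_storage_quota([10, 20], 5, max_total_gib=50, max_claims=5))   # True
print(within_storage_quota([10, 20], 25, max_total_gib=50, max_claims=5))  # False
print(within_storage_quota([10, 20], 5, max_total_gib=50, max_claims=2))   # False
```

The second call fails on the total requested storage, and the third fails on the number of claims, even though the requested size is small.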

Which of the following are compute resource types that can be limited, if desired, on each container within a pod?

CPU

Memory

Developers can limit which of the following resources on pods?

Storage resources within a namespace

Consumption of storage resources based on associated storage-class

Limiting Number of Objects (Object Count Quota)

Pod Security Policies

3.4 Probes

There are three types of handlers:

- ExecAction: Executes a specified command inside the container. The diagnostic is considered successful if the command exits with a status code of 0.

- TCPSocketAction: Performs a TCP check against the container’s IP address on a specified port. The diagnostic is considered successful if the port is open.

- HTTPGetAction: Performs an HTTP Get request against the container’s IP address on a specified port and path. The diagnostic is considered successful if the response has a status code greater than or equal to 200 and less than 400.

Each probe has one of three results:

- Success: The container passed the diagnostic.

- Failure: The container failed the diagnostic.

- Unknown: The diagnostic failed, so no action should be taken.

There are three kinds of probes:

- Readiness Probe: Indicates whether the container is ready to service requests.

- Liveness Probe: Indicates whether the container is running.

- Startup Probes for Slow Starting Containers: Indicates whether the application within the container is started.
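The success rules for the ExecAction and HTTPGetAction handlers can be written down as two small Python functions. The function names are invented for this note, and the sketch only maps an already-observed exit code or status code to a result; it does not run commands or send requests.

```python
def exec_probe_result(exit_code: int) -> str:
    """ExecAction: the diagnostic succeeds only when the command exits with 0."""
    return "Success" if exit_code == 0 else "Failure"


def http_probe_result(status_code: int) -> str:
    """HTTPGetAction: success means 200 <= status code < 400."""
    return "Success" if 200 <= status_code < 400 else "Failure"


print(exec_probe_result(0))    # Success
print(exec_probe_result(1))    # Failure
print(http_probe_result(200))  # Success
print(http_probe_result(302))  # Success
print(http_probe_result(404))  # Failure
```

Note that redirects (3xx) count as success under this rule, while client and server errors (4xx and 5xx) count as failure.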

3.5 Rolling Updates

- Provide a way to roll out app changes in an automated and controlled fashion throughout your pods.

- Work with pod templates such as deployments.

- Allow for rollback if something goes wrong.
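The idea of a controlled rollout can be simulated in Python. This is a deliberately simplified sketch: the function rolling_update is invented for this note and replaces exactly one pod per step, whereas a real Deployment controls the pace through its rolling update strategy settings (maxSurge and maxUnavailable).

```python
from typing import List


def rolling_update(pods: List[str], new_version: str) -> List[List[str]]:
    """Replace pods one at a time and record the versions after each step."""
    current = list(pods)  # copy so the caller's list is not changed
    history = []
    for index in range(len(current)):
        current[index] = new_version   # swap one old pod for a new one
        history.append(list(current))  # snapshot of the mixed state
    return history


for step in rolling_update(["v1", "v1", "v1"], "v2"):
    print(step)
# ['v2', 'v1', 'v1']
# ['v2', 'v2', 'v1']
# ['v2', 'v2', 'v2']
```

During the rollout both versions serve traffic side by side, and a rollback in this model is simply the same procedure run again with the previous version.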

Current State

    » kubectl get pods
    NAME                                READY   STATUS    RESTARTS   AGE
    hello-kubernetes-55fd6f66c5-wv5tg   1/1     Running   0          5m51s
    hello-kubernetes-55fd6f66c5-z6tn7   1/1     Running   0          6m33s
    hello-kubernetes-55fd6f66c5-zwwqt   1/1     Running   0          5m51s

Changing Versions

Before:

    // Configuration
    var port = process.env.PORT || 8080;
    var message = process.env.MESSAGE || "Hello world!";

After:

    // Configuration
    var port = process.env.PORT || 8080;
    var message = process.env.MESSAGE || "Hello world v2!";

3.6 ConfigMaps and Secrets

It’s a good practice not to hard-code configuration variables in code. Keep them separate so that changes in configuration do not require code changes. Examples of these variables include non-sensitive information like environments (dev, test, prod) or sensitive information like API keys and account IDs.

ConfigMaps provide one way to provide this configuration data to pods and deployments so that they are not hardcoded inside the application code.

ConfigMaps can be created in a few different ways:

- Using string literals.

- Using existing properties or "key"="value" files.

- Providing a ConfigMap YAML descriptor file. Both the first and second ways can help us create such a YAML file.
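On the application side, configuration that is kept out of the code is often read from environment variables, which is one common way for ConfigMap values to reach a container. The Python sketch below mirrors the PORT and MESSAGE variables used in the Node.js snippet in section 3.5; the function name load_config is an assumption for illustration.

```python
import os
from typing import Mapping


def load_config(env: Mapping[str, str] = os.environ) -> dict:
    """Read settings from the environment, falling back to defaults."""
    return {
        "port": int(env.get("PORT", "8080")),
        "message": env.get("MESSAGE", "Hello world!"),
    }


print(load_config({}))
# {'port': 8080, 'message': 'Hello world!'}
print(load_config({"PORT": "9090", "MESSAGE": "Hello from a ConfigMap"}))
# {'port': 9090, 'message': 'Hello from a ConfigMap'}
```

Because the values come from outside, the message can change without rebuilding the image; only the configuration supplied to the pod changes.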

3.7 Persistent Volumes and Persistent Volume Claims

3.8 Service Binding

- Service binding allows you to bind an IBM Cloud service instance to a Kubernetes cluster.

- The service credentials are available as secrets to any application deployed on the cluster.

- By mounting the Kubernetes secret as a volume to your deployment, you make the IBM Cloud(R) Identity and Access Management (IAM) API key available to the container that runs in your pod.

- A JSON file called binding is created in the volume mount directory.

Kubernetes LAB

1. Check if kubectl is installed

Check the Kubernetes version with the command "kubectl version"

or "kubectl version --output=json"

or "kubectl version --output=yaml"

2. To deploy a pod, save the below as file.yaml and put it in a working directory

    apiVersion: v1
    kind: Pod
    metadata:
      name: nginx
    spec:
      containers:
      -name:nginx
        image:nginx:1.7.9
        ports:
        -containerPort:80

cd to that directory and run kubectl apply -f file.yaml. Not working.

3. Install minikube

https://minikube.sigs.k8s.io/...

4. Check if minikube is installed

5. Start minikube

6. Start minikube dashboard

Before you run minikube dashboard, open a new terminal, start minikube dashboard there, and then switch back to the main terminal.

Not working.

7. Restart the laptop and try again

(Running minikube dashboard in the same terminal also works.)

A URL opens automatically. Navigate to the cluster:

8. Access your cluster with "kubectl get po -A"

9. Try step 2 again

Modify the indentation and spacing in the YAML file as below, based on https://stackoverflow.com/que...

    apiVersion: v1
    kind: Pod
    metadata:
      name: nginx
    spec:
      containers:
      - name: nginx
        image: nginx:1.7.9
        ports:
        - containerPort:80

Rerun the command.

Still not working. Modify the format as below (a space is needed after "containerPort:"), based on https://stackoverflow.com/que...

    apiVersion: v1
    kind: Pod
    metadata:
      name: nginx
    spec:
      containers:
      - name: nginx
        image: nginx:1.7.9
        ports:
        - containerPort: 80

Now it works.

10. However, the pod shows status=CrashLoopBackOff. Check the dashboard:

It shows an error. Checking the reason, we see "ContainersNotReady".

Going back to our YAML file, we are specifying the container image as nginx:1.7.9 (nginx is an open-source web server), but we don't have it ready.

11. Install nginx by running it as a Docker container

    docker container run -d --name nginx01 nginx

12. Modify the container info in the YAML file as below

    apiVersion: v1
    kind: Pod
    metadata:
      name: nginx
    spec:
      containers:
      - name: nginx01
        image: nginx:1.23.0
        ports:
        - containerPort: 80

Delete the old pod with "kubectl delete pod nginx" and recreate it with "kubectl apply -f file.yaml".

The dashboard shows "pending" while the container is being created. Wait for a minute. Now it works.

13. We have created the Pod. Let's create a deployment

Save the below as nginx.yaml in a working directory.

    apiVersion: apps/v1
    kind: Deployment
    metadata:
      name: nginx-deployment
      labels:
        app: nginx
    spec:
      replicas: 3
      selector:
        matchLabels:
          app: nginx
      template:
        metadata:
          labels:
            app: nginx
        spec:
          containers:
          - name: nginx01
            image: nginx:1.23.0
            ports:
            - containerPort: 80

Run "kubectl apply -f nginx.yaml"

14. Let's create another deployment

Save the below as deployment.yaml

    apiVersion: apps/v1
    kind: Deployment
    metadata:
      name: hello-kubernetes
    spec:
      selector:
        matchLabels:
          app: hello-kubernetes
      template:
        metadata:
          labels:
            app: hello-kubernetes
        spec:
          containers:
          - name: hello-kubernetes
            image: paulbouwer/hello-kubernetes:1.5
            ports:
            - containerPort: 8080

Run the below commands

    kubectl apply -f deployment.yaml
    kubectl get deployment
    kubectl get pods
    kubectl get replicaset    (or: kubectl get rs)

15. Use the expose command to get a public URL for the deployment

Expose the service associated with this deployment:

    kubectl expose deployment hello-kubernetes --type=NodePort --port=80 --target-port=8080 --name=hello-kubernetes

Get the public IP of your cluster itself:

    kubectl get node -o wide

Get the exposed port from the service itself by asking for the details in JSON format and plucking the nodePort property:

    kubectl get service hello-kubernetes -o jsonpath="{.spec.ports[0].nodePort}{'\n'}"
    30610
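What the jsonpath expression plucks out can be reproduced in Python on a JSON document. The service document below is a heavily trimmed, hypothetical stand-in for the real output of the service in JSON format; only the port numbers are taken from the commands above, and the function name node_port is made up for this note.

```python
import json

# Trimmed, hypothetical stand-in for the service's JSON representation.
service_json = """
{
  "spec": {
    "type": "NodePort",
    "ports": [
      {"port": 80, "targetPort": 8080, "nodePort": 30610}
    ]
  }
}
"""


def node_port(service_document: str) -> int:
    """Follow the same path as the jsonpath {.spec.ports[0].nodePort}."""
    service = json.loads(service_document)
    return service["spec"]["ports"][0]["nodePort"]


print(node_port(service_json))  # 30610
```

The application is then reachable at the node's public IP on that port.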
