Introduction

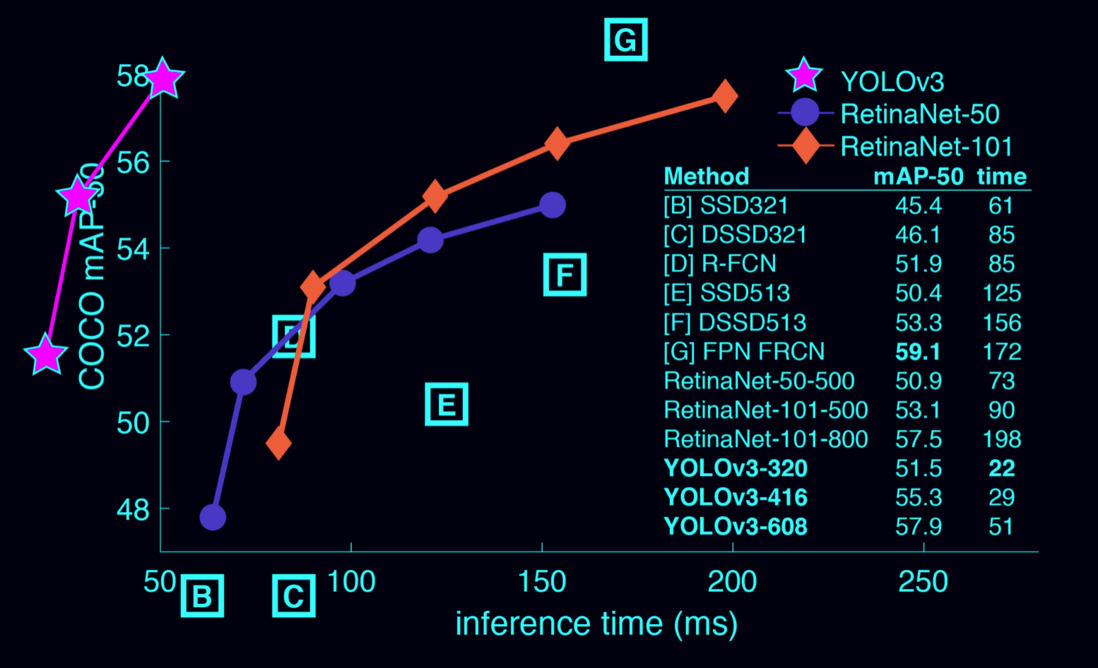

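YOLO is an end-to-end, deep-learning-based system for real-time object detection. It combines region (bounding-box) prediction and class prediction in a single neural network, so it can detect and recognize objects quickly while keeping accuracy relatively high, which makes it well suited to on-site applications. In this walkthrough we quickly put together a video object detection demo; the underlying principles will be covered in detail in a separate article.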

Download and Build

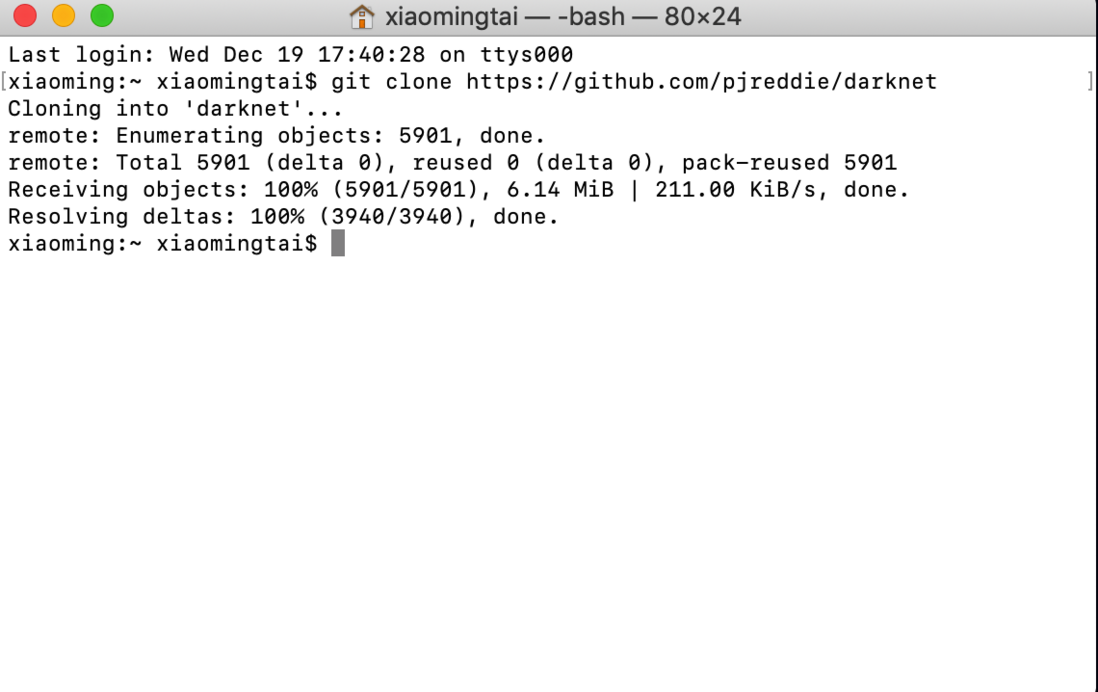

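We start by downloading Darknet, the framework that YOLO's author wrote in C; it supports GPU acceleration. There are two ways to get it:

- Download the Darknet archive (zip)

- Clone the repository with git

For the second option, run:

    git clone https://github.com/pjreddie/darknet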

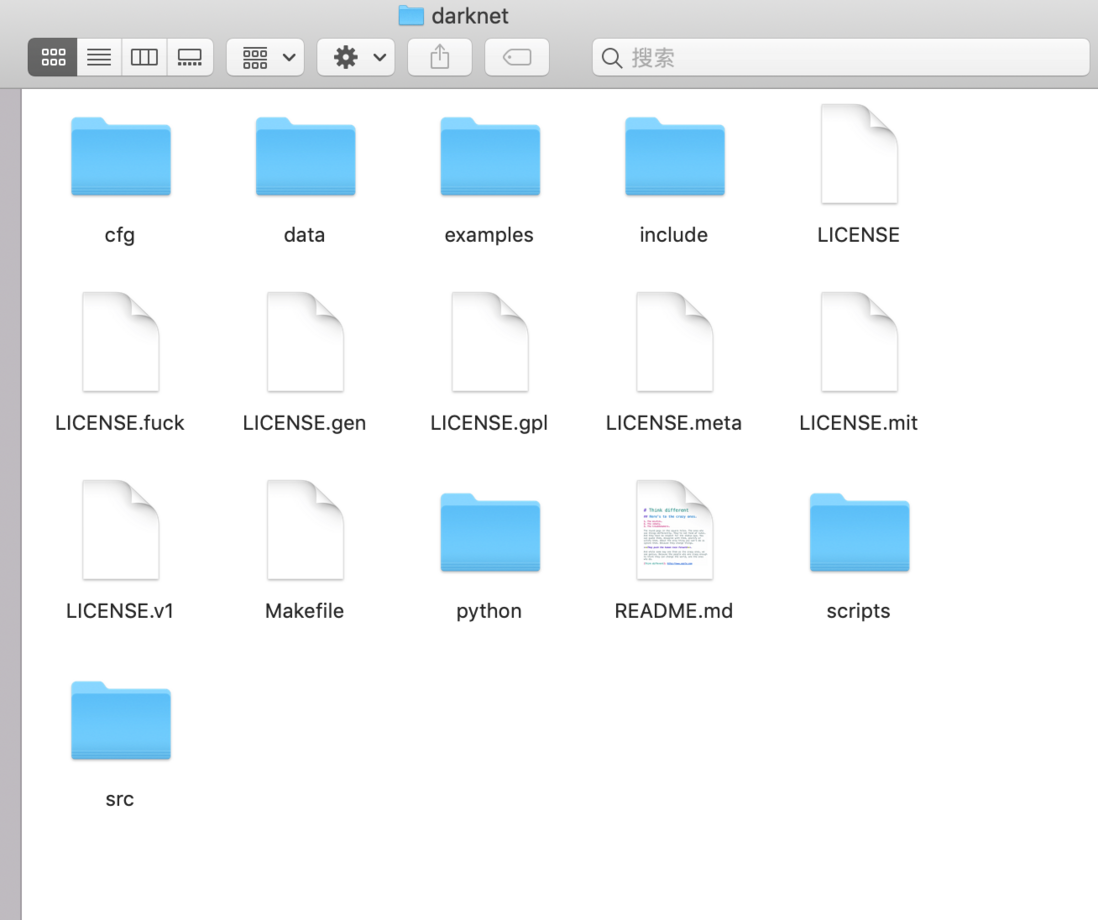

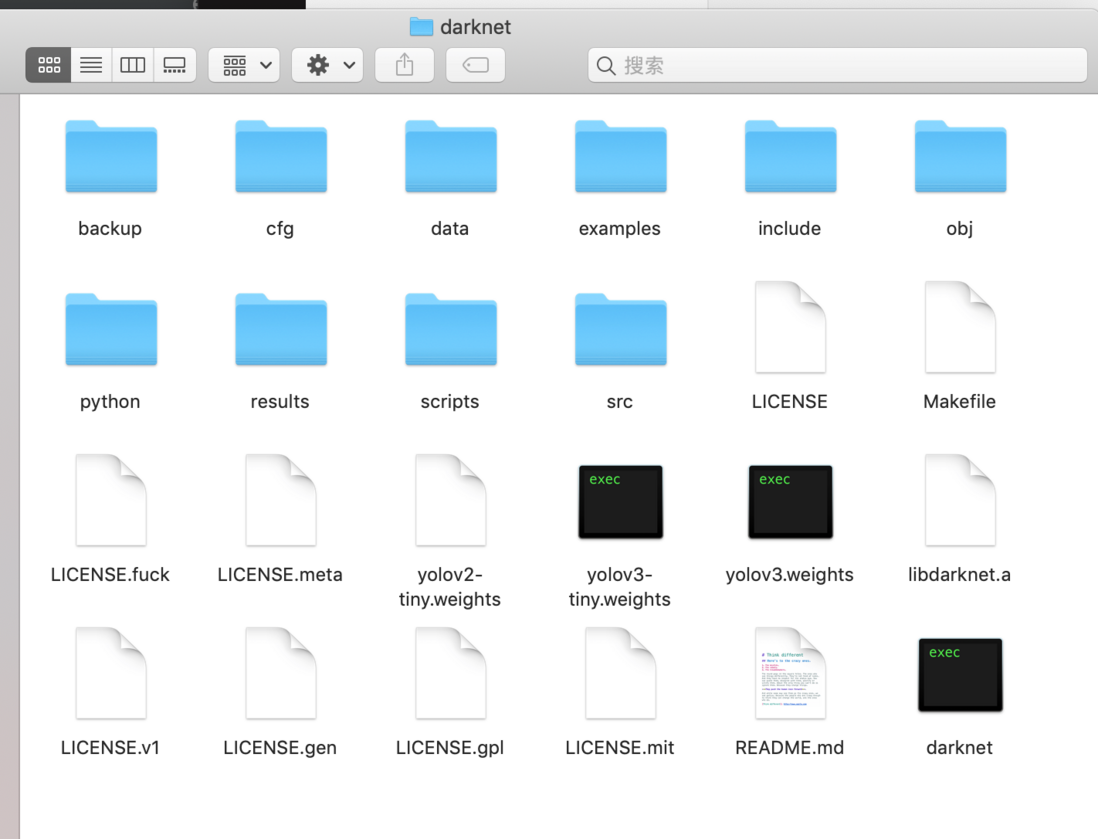

After downloading, the full directory contents are as shown in the screenshot.

Build:

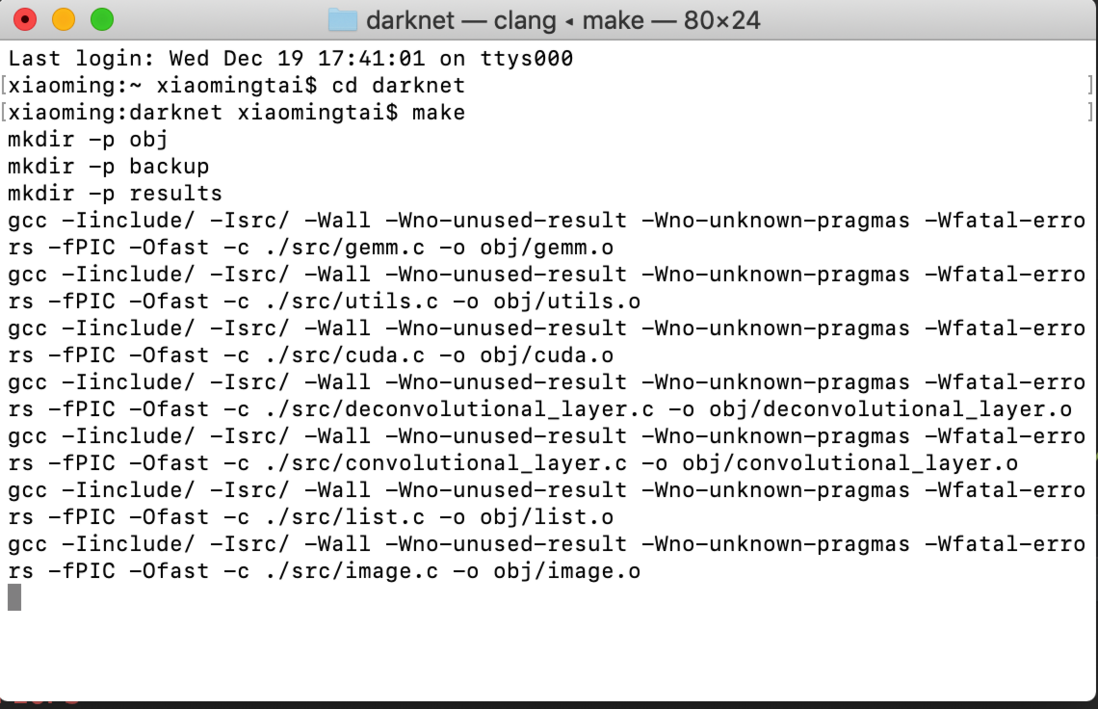

    cd darknet

    # build
    make

After the build, the directory contents are as shown in the screenshot:

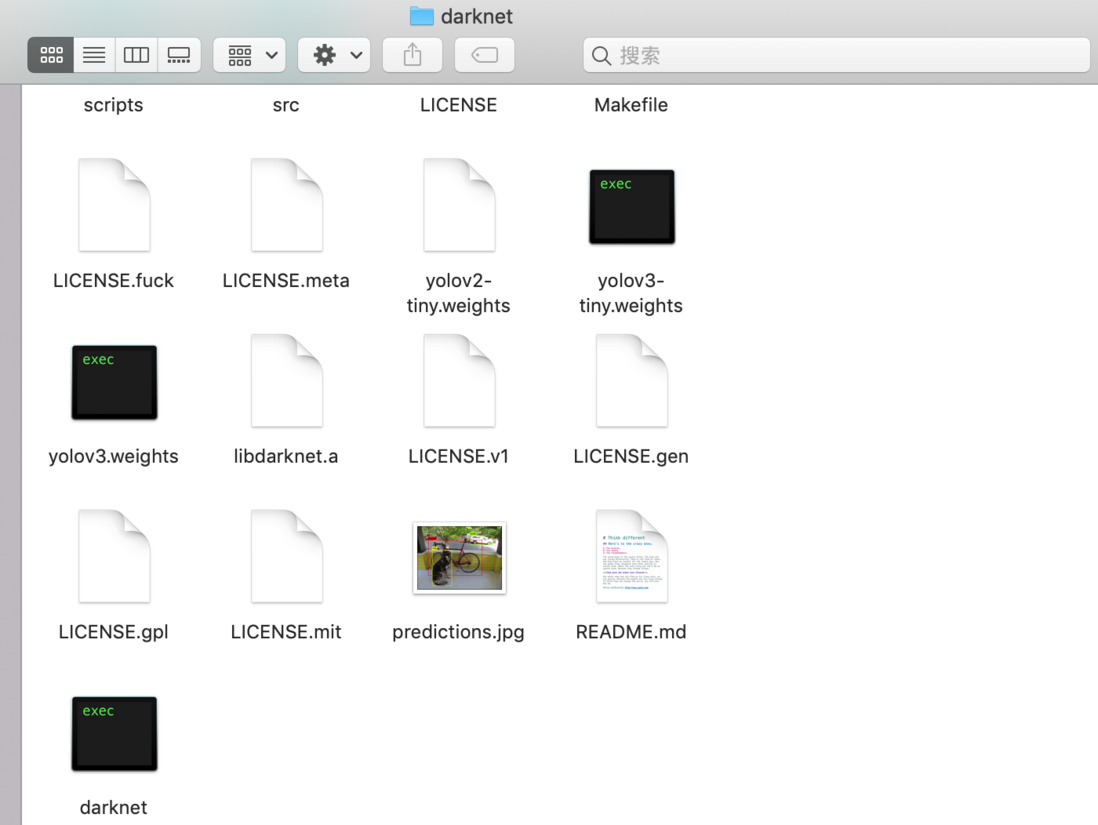

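Download the Weights

Here we download the "yolov3" weights, a file of a little over 200 MB; "yolov3-tiny" is much smaller, at a little over 30 MB.

    wget https://pjreddie.com/media/files/yolov3.weights

After the download, the directory contents are as shown in the screenshot. The "yolov3-tiny.weights" and "yolov2-tiny.weights" files that appear in it are ones I downloaded separately.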

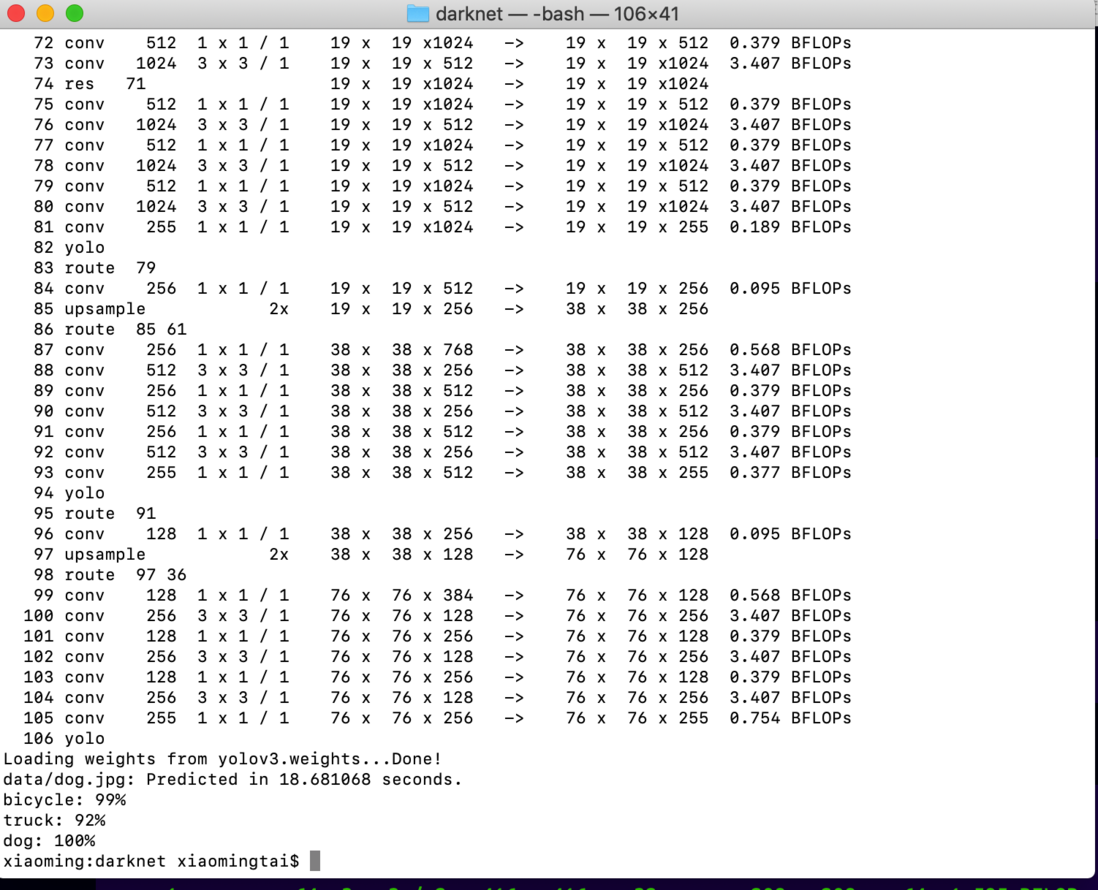
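Because the weights file is large, an interrupted download is easy to miss. The sketch below is not part of Darknet; it only checks a file's size on disk. The helper names `size_in_mb` and `looks_complete` are hypothetical, and the 200 MB threshold is an assumption taken from the rough size quoted above.

```python
import os


def size_in_mb(path):
    """Return the size of the file at `path` in mebibytes."""
    return os.path.getsize(path) / (1024 * 1024)


def looks_complete(path, min_mb):
    """Heuristic only: True if the file exists and is at least `min_mb` MB."""
    return os.path.isfile(path) and size_in_mb(path) >= min_mb


if __name__ == "__main__":
    # Assumed threshold: the article quotes "a little over 200 MB" for yolov3.
    print(looks_complete("yolov3.weights", min_mb=200))
```

A size check like this cannot detect a corrupted file; it only catches downloads that stopped early.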

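Prediction in C

    ./darknet detect cfg/yolov3.cfg yolov3.weights data/dog.jpg

As the screenshot shows, the model predicts three classes together with their probabilities. The output image is written to the darknet root directory under the name "predictions.jpg".

Let's open the output image and take a look.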
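To script the same command, a minimal Python sketch could assemble it as an argument list and hand it to `subprocess.run`. The helper names `build_detect_command` and `run_detect` are hypothetical; only the command line itself comes from the step above, and the sketch assumes a POSIX system with the compiled `darknet` binary in the darknet root.

```python
import subprocess


def build_detect_command(cfg, weights, image, binary="./darknet"):
    """Return the argv list for `darknet detect`, mirroring the command above."""
    return [binary, "detect", cfg, weights, image]


def run_detect(cfg, weights, image, darknet_dir="."):
    """Run the detector from `darknet_dir` (assumed to be the darknet root)."""
    cmd = build_detect_command(cfg, weights, image)
    # check=True raises CalledProcessError if darknet exits with an error.
    return subprocess.run(cmd, cwd=darknet_dir, check=True)


if __name__ == "__main__":
    # Only prints the command; call run_detect(...) to actually execute it.
    print(" ".join(build_detect_command(
        "cfg/yolov3.cfg", "yolov3.weights", "data/dog.jpg")))
```

Passing the arguments as a list avoids shell quoting problems when a path contains spaces.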

Prediction in Python

First, move the "libdarknet.so" file from the "darknet" folder into "darknet/python". The result is shown in the screenshot:

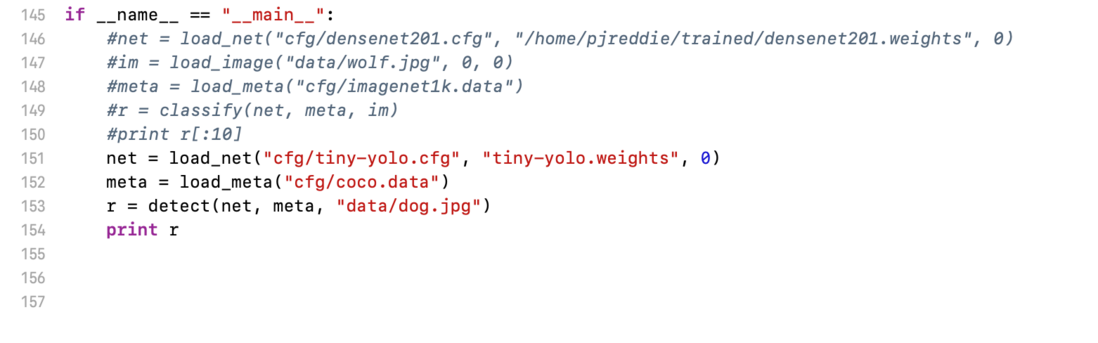

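We will use Darknet's bundled "darknet.py" for prediction. Before running it, the file needs a few changes:

- The script is written for Python 2, so under Python 3 its print statements must be rewritten as print() calls

- Because values are passed between Python and C, string arguments must be encoded as bytes

- Use absolute paths

After the changes, the file looks like this: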

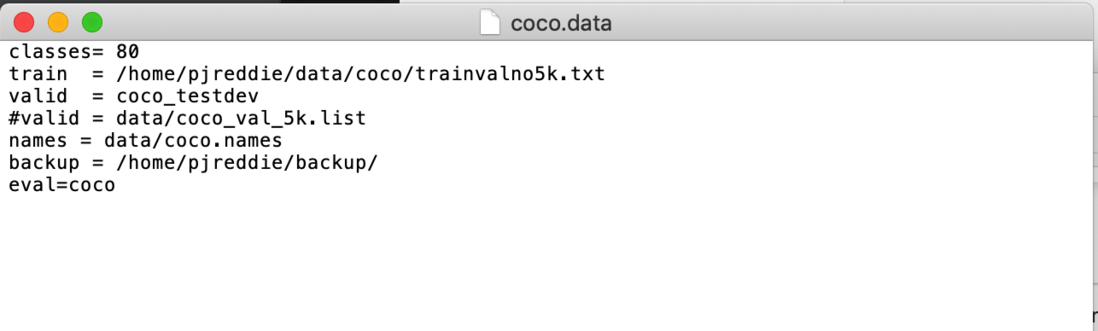
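The second and third changes can be sketched together. In Python 3, `str` is Unicode text, while a `c_char_p` argument in ctypes expects `bytes`, so paths have to be encoded before they cross into C. The helper name `to_c_path` and the example path are assumptions for illustration, not code from "darknet.py".

```python
import os
from ctypes import c_char_p


def to_c_path(path):
    """Expand `path` to an absolute path and encode it as bytes for ctypes."""
    return os.path.abspath(os.path.expanduser(path)).encode("utf-8")


cfg = to_c_path("cfg/yolov3.cfg")   # resolved against the current directory
as_c_string = c_char_p(cfg)         # accepted because cfg is bytes, not str

# Python 3: print is a function, so the parentheses are required.
print(as_c_string.value == cfg)
```

Passing a plain `str` to `c_char_p` in Python 3 raises a `TypeError`, which is the symptom the encoding step avoids.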

Open "darknet/cfg/coco.data" and change "names" to an absolute path as well (the screenshot does not include this change; adjust it to your own path).
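Editing the file by hand is enough, but the change can also be sketched in code. The example below assumes the `key = value` line format of Darknet ".data" files and POSIX-style paths; `absolutize_names` is a hypothetical helper, the two-line sample text is illustrative rather than the full file, and "/path/to/darknet" is a placeholder.

```python
import posixpath


def absolutize_names(data_text, darknet_root):
    """Return `data_text` with a relative `names` entry made absolute.

    Lines other than `names` are passed through unchanged.
    """
    out = []
    for line in data_text.splitlines():
        key, sep, value = line.partition("=")
        if sep and key.strip() == "names":
            path = value.strip()
            if not posixpath.isabs(path):
                path = posixpath.join(darknet_root, path)
            line = "names = " + path
        out.append(line)
    return "\n".join(out)


# Illustrative two-line sample; the root directory is a placeholder.
sample = "classes= 80\nnames = data/coco.names"
print(absolutize_names(sample, "/path/to/darknet"))
```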

Now we can run a prediction: change into "darknet/python" and run "darknet.py":

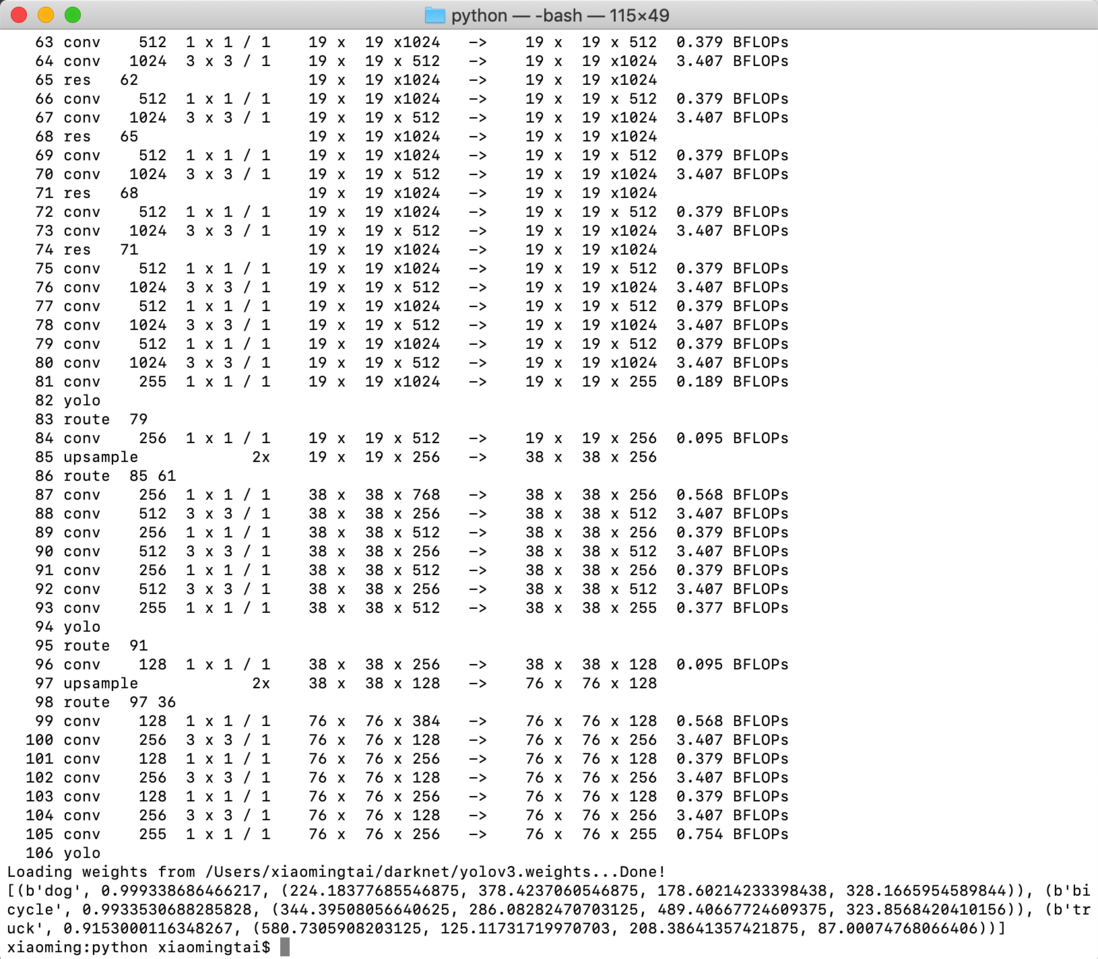

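The result is shown in the screenshot.

A brief note on the model output, using one detection as an example:

    # Fields, in order: class, confidence, box center x, box center y, box width, box height
    (b'dog', 0.999338686466217, (224.18377685546875, 378.4237060546875, 178.60214233398438, 328.1665954589844))

Video Detection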

    from ctypes import *
    import random

    import cv2
    import numpy as np


    def sample(probs):
        # Draw an index at random, weighted by the (unnormalized) values in probs.
        s = sum(probs)
        probs = [a/s for a in probs]
        r = random.uniform(0, 1)
        for i in range(len(probs)):
            r = r - probs[i]
            if r <= 0:
                return i
        return len(probs)-1


    def c_array(ctype, values):
        # Copy a Python sequence into a newly allocated C array of the given ctype.
        arr = (ctype*len(values))()
        arr[:] = values
        return arr

    # ctypes mirrors of the C structs used by libdarknet.
    class BOX(Structure):
        _fields_ = [("x", c_float),
                    ("y", c_float),
                    ("w", c_float),
                    ("h", c_float)]


    class DETECTION(Structure):
        _fields_ = [("bbox", BOX),
                    ("classes", c_int),
                    ("prob", POINTER(c_float)),
                    ("mask", POINTER(c_float)),
                    ("objectness", c_float),
                    ("sort_class", c_int)]


    class IMAGE(Structure):
        _fields_ = [("w", c_int),
                    ("h", c_int),
                    ("c", c_int),
                    ("data", POINTER(c_float))]


    class METADATA(Structure):
        _fields_ = [("classes", c_int),
                    ("names", POINTER(c_char_p))]
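As an aside, separate from the script: ctypes derives the memory layout of a `Structure` from `_fields_`, so each class has to list its fields in the same order and with the same types as the C struct it mirrors. A minimal standalone sketch using the `BOX` definition (the field values are arbitrary):

```python
from ctypes import Structure, c_float, sizeof


class BOX(Structure):
    # Same field order and types as the BOX class in the script.
    _fields_ = [("x", c_float),
                ("y", c_float),
                ("w", c_float),
                ("h", c_float)]


box = BOX(0.5, 0.25, 2.0, 4.0)    # positional arguments follow _fields_ order
print(box.x, box.h)               # fields read back as Python floats
print(sizeof(BOX))                # size of four C floats
```

If a field were missing or reordered, the values read on the Python side would no longer line up with what the C code wrote.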

    # Load the shared library built by make, then declare the argument and
    # return types of every C function the script calls.
    lib = CDLL("../python/libdarknet.so", RTLD_GLOBAL)
    lib.network_width.argtypes = [c_void_p]
    lib.network_width.restype = c_int
    lib.network_height.argtypes = [c_void_p]
    lib.network_height.restype = c_int

    predict = lib.network_predict
    predict.argtypes = [c_void_p, POINTER(c_float)]
    predict.restype = POINTER(c_float)

    set_gpu = lib.cuda_set_device
    set_gpu.argtypes = [c_int]

    make_image = lib.make_image
    make_image.argtypes = [c_int, c_int, c_int]
    make_image.restype = IMAGE

    get_network_boxes = lib.get_network_boxes
    get_network_boxes.argtypes = [c_void_p, c_int, c_int, c_float, c_float, POINTER(c_int), c_int, POINTER(c_int)]
    get_network_boxes.restype = POINTER(DETECTION)

    make_network_boxes = lib.make_network_boxes
    make_network_boxes.argtypes = [c_void_p]
    make_network_boxes.restype = POINTER(DETECTION)

    free_detections = lib.free_detections
    free_detections.argtypes = [POINTER(DETECTION), c_int]

    free_ptrs = lib.free_ptrs
    free_ptrs.argtypes = [POINTER(c_void_p), c_int]

    network_predict = lib.network_predict
    network_predict.argtypes = [c_void_p, POINTER(c_float)]

    reset_rnn = lib.reset_rnn
    reset_rnn.argtypes = [c_void_p]

    load_net = lib.load_network
    load_net.argtypes = [c_char_p, c_char_p, c_int]
    load_net.restype = c_void_p

    do_nms_obj = lib.do_nms_obj
    do_nms_obj.argtypes = [POINTER(DETECTION), c_int, c_int, c_float]

    do_nms_sort = lib.do_nms_sort
    do_nms_sort.argtypes = [POINTER(DETECTION), c_int, c_int, c_float]

    free_image = lib.free_image
    free_image.argtypes = [IMAGE]

    letterbox_image = lib.letterbox_image
    letterbox_image.argtypes = [IMAGE, c_int, c_int]
    letterbox_image.restype = IMAGE

    load_meta = lib.get_metadata
    lib.get_metadata.argtypes = [c_char_p]
    lib.get_metadata.restype = METADATA

    load_image = lib.load_image_color
    load_image.argtypes = [c_char_p, c_int, c_int]
    load_image.restype = IMAGE

    rgbgr_image = lib.rgbgr_image
    rgbgr_image.argtypes = [IMAGE]

    predict_image = lib.network_predict_image
    predict_image.argtypes = [c_void_p, IMAGE]
    predict_image.restype = POINTER(c_float)
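As an aside, separate from the script: `argtypes` and `restype` tell ctypes how to convert arguments and return values. Without `restype`, ctypes assumes the function returns a C `int`, which can truncate pointers on 64-bit systems. The sketch below shows the same pattern on the C library's `strlen` rather than on Darknet, so it runs without "libdarknet.so". It assumes a POSIX system, where `CDLL(None)` exposes the symbols already loaded into the current process.

```python
from ctypes import CDLL, c_char_p, c_size_t

# Assumption: POSIX. CDLL(None) opens the current process, which already
# has the C standard library loaded.
libc = CDLL(None)

strlen = libc.strlen
strlen.argtypes = [c_char_p]   # one argument: a NUL-terminated byte string
strlen.restype = c_size_t      # return value: size_t, not the default int

print(strlen(b"darknet"))
```

With `argtypes` declared, passing a value of the wrong type raises `ctypes.ArgumentError` in Python rather than handing C a malformed argument.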

    def convertBack(x, y, w, h):
        # Convert a box given as center (x, y) plus width and height
        # into integer corner coordinates.
        xmin = int(round(x - (w / 2)))
        xmax = int(round(x + (w / 2)))
        ymin = int(round(y - (h / 2)))
        ymax = int(round(y + (h / 2)))
        return xmin, ymin, xmax, ymax
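As an aside, separate from the script: the script treats each box as a center point plus a size, while `cv2.rectangle` takes two corner points, which is why `convertBack` is needed. Applying it to the dog detection from the Python prediction section gives:

```python
def convertBack(x, y, w, h):
    # Same conversion as in the script: center/size -> corner coordinates.
    xmin = int(round(x - (w / 2)))
    xmax = int(round(x + (w / 2)))
    ymin = int(round(y - (h / 2)))
    ymax = int(round(y + (h / 2)))
    return xmin, ymin, xmax, ymax


# Box values copied from the sample detection shown earlier.
dog_box = (224.18377685546875, 378.4237060546875,
           178.60214233398438, 328.1665954589844)
print(convertBack(*dog_box))  # (135, 214, 313, 543)
```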

    def array_to_image(arr):
        # need to return old values to avoid python freeing memory
        arr = arr.transpose(2,0,1)
        c, h, w = arr.shape[0:3]
        arr = np.ascontiguousarray(arr.flat, dtype=np.float32) / 255.0
        data = arr.ctypes.data_as(POINTER(c_float))
        im = IMAGE(w,h,c,data)
        return im, arr
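As an aside, separate from the script: `array_to_image` reorders an OpenCV frame, stored as (height, width, channel), into channel-major order and flattens it before handing the buffer to Darknet as `IMAGE.data`. The standalone sketch below repeats those NumPy steps on a tiny array and checks where one pixel value ends up; the array contents are arbitrary stand-ins for a real frame.

```python
import numpy as np

h, w, c = 2, 3, 3
# Stand-in for a frame: values 0..17 laid out as (row, column, channel).
frame = np.arange(h * w * c, dtype=np.uint8).reshape(h, w, c)

# Same steps as array_to_image: HWC -> CHW, flatten, scale to [0, 1].
chw = frame.transpose(2, 0, 1)
flat = np.ascontiguousarray(chw.flat, dtype=np.float32) / 255.0

# In the flat buffer, channel k of the pixel at (row y, column x)
# sits at index k*h*w + y*w + x.
y, x, k = 1, 2, 0
index = k * h * w + y * w + x
print(np.isclose(flat[index], frame[y, x, k] / 255.0))
```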

    def detect(net, meta, image, thresh=.5, hier_thresh=.5, nms=.45):
        # Convert the OpenCV frame to a Darknet IMAGE; keep a reference to the
        # backing array so its memory stays alive during the C calls.
        im, image = array_to_image(image)
        rgbgr_image(im)  # swap the red and blue channels (OpenCV frames are BGR)
        num = c_int(0)
        pnum = pointer(num)
        predict_image(net, im)
        dets = get_network_boxes(net, im.w, im.h, thresh,
                                 hier_thresh, None, 0, pnum)
        num = pnum[0]
        if nms:
            do_nms_obj(dets, num, meta.classes, nms)

        res = []
        for j in range(num):
            a = dets[j].prob[0:meta.classes]
            if any(a):
                ai = np.array(a).nonzero()[0]
                for i in ai:
                    b = dets[j].bbox
                    res.append((meta.names[i], dets[j].prob[i],
                                (b.x, b.y, b.w, b.h)))

        # Highest-probability detections first.
        res = sorted(res, key=lambda x: -x[1])
        if isinstance(image, bytes):
            free_image(im)
        free_detections(dets, num)
        return res
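As an aside, separate from the script: `detect` returns a list of `(name, probability, (x, y, w, h))` tuples sorted by descending probability, with `name` as `bytes`. A small post-processing step is sometimes useful, for example to keep only certain classes. The sketch below is one possible shape for it; `filter_detections` is a hypothetical name, and the second detection in the sample list is invented for illustration.

```python
def filter_detections(detections, wanted, min_prob=0.5):
    """Keep detections whose decoded class name is in `wanted`
    and whose probability is at least `min_prob`."""
    kept = []
    for name, prob, box in detections:
        if name.decode("utf-8") in wanted and prob >= min_prob:
            kept.append((name, prob, box))
    return kept


detections = [
    # First entry: the sample output shown earlier.
    (b'dog', 0.999338686466217,
     (224.18377685546875, 378.4237060546875,
      178.60214233398438, 328.1665954589844)),
    # Second entry: invented values, for illustration only.
    (b'bicycle', 0.3, (100.0, 100.0, 50.0, 50.0)),
]
print(filter_detections(detections, wanted={"dog", "bicycle"}))
```

Because the input order is preserved, the filtered list stays sorted by probability.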

if __name__ == "__main__":

cap = cv2.VideoCapture(0)

ret, img = cap.read()

fps = cap.get(cv2.CAP_PROP_FPS)

net = load_net(b"/Users/xiaomingtai/darknet/cfg/yolov2-tiny.cfg", b"/Users/xiaomingtai/darknet/yolov2-tiny.weights", 0)

meta = load_meta(b"/Users/xiaomingtai/darknet/cfg/coco.data")

cv2.namedWindow("img", cv2.WINDOW_NORMAL)

while(True):

ret, img = cap.read()

if ret:

r = detect(net, meta, img)

for i in r:

x, y, w, h = i[2][0], i[2][17], i[2][18], i[2][19]

xmin, ymin, xmax, ymax = convertBack(float(x), float(y), float(w), float(h))

pt1 = (xmin, ymin)

pt2 = (xmax, ymax)

cv2.rectangle(img, pt1, pt2, (0, 255, 0), 2)

cv2.putText(img, i[0].decode() + " [" + str(round(i[1] * 100, 2)) + "]", (pt1[0], pt1[1] + 20), cv2.FONT_HERSHEY_SIMPLEX, 1, [0, 255, 0], 4)

cv2.imshow("img", img)

if cv2.waitKey(1) & 0xFF == ord('q'):

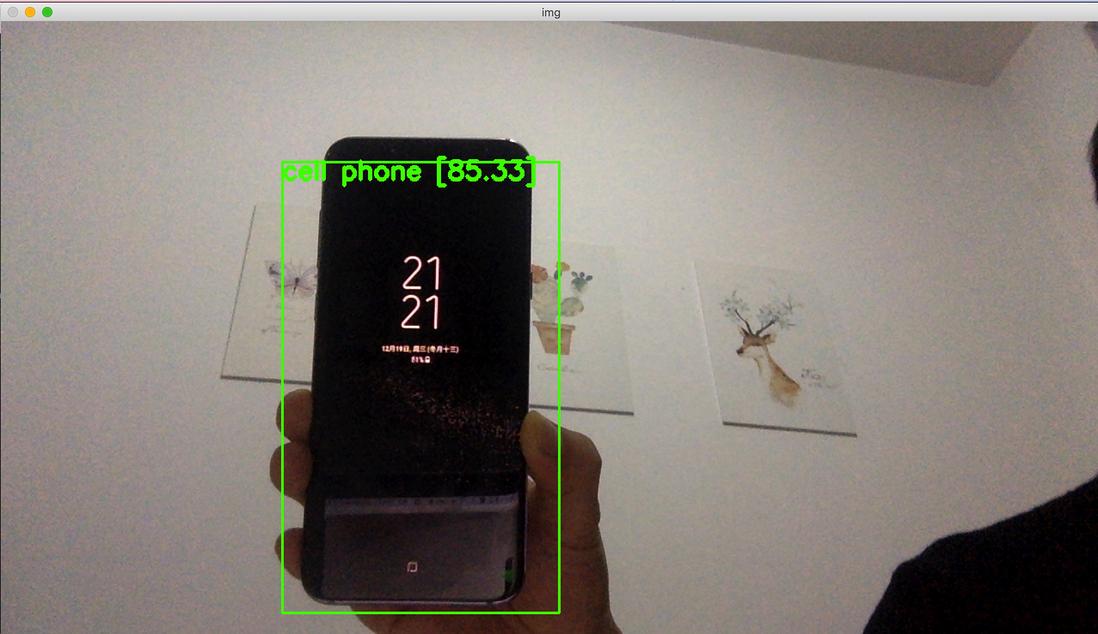

break模型输出结果:

模型视频检测结果:

Without a GPU, it is best not to use yolov3; it is very slow.
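To put a number on "slow" for a given machine, one option is to time the per-frame work and convert it to frames per second. The sketch below is a generic timing helper, not part of the script; `measure_fps` is a hypothetical name, and the dummy workload stands in for a call such as `detect(net, meta, img)`.

```python
import time


def measure_fps(process_frame, n_frames=20):
    """Call `process_frame()` n_frames times and return average frames/second."""
    start = time.perf_counter()
    for _ in range(n_frames):
        process_frame()
    elapsed = time.perf_counter() - start
    return n_frames / elapsed if elapsed > 0 else float("inf")


def dummy_frame():
    # Placeholder workload; replace with the real detection call.
    sum(i * i for i in range(10000))


print(round(measure_fps(dummy_frame), 1))
```

Running the same measurement with the yolov3 and yolov2-tiny models would show how much the smaller network helps on a CPU-only machine.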

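Summary

This article is a quick, hands-on introduction to YOLO: with very little code we get decent object detection. To really master YOLO, though, it is essential to understand the principles behind it; the next article will focus on how YOLO works.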
