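I implemented a worker class by subclassing multiprocessing.Process. The parent process enforces its own limit of at most N concurrently running workers (30 in the environment where the problem occurs).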

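In production, roughly 2 in 10,000 runs fail (currently 46,000+ runs per day, with about 11 failures): the child process is created, but its run method is never executed.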

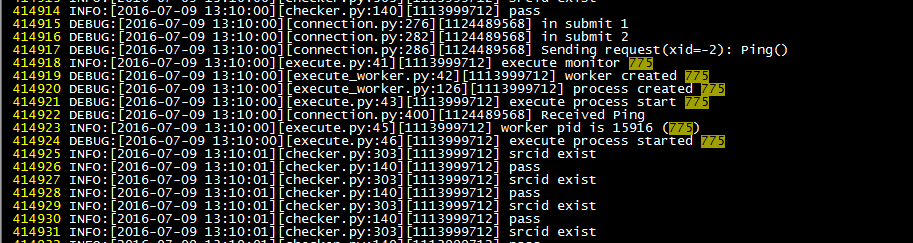

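The code and logs are below; pay attention to the logging statements.

The parent process starts the child process (the parent also has logic that caps the number of concurrent processes; I can post it if needed):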

    ...

    def run_task(self, task):
        logging.info('execute monitor %s' % task['id'])
        worker = execute_worker.ExecuteWorkerProcess(task)
        logging.debug('execute process start %s' % task['id'])
        worker.start()
        logging.info('worker pid is %s (%s)' % (worker.pid, task['id']))
        logging.debug('execute process started %s' % task['id'])
        self.worker_pool.append(worker)

    ...

The child process's run method:

    class ExecuteWorkerProcess(multiprocessing.Process):

        ...

        def __init__(self, task):
            super(ExecuteWorkerProcess, self).__init__()
            self.stopping = False
            self.task = task
            self.worker = ExecuteWorker(task)
            if 'task' in task:
                self.routine = False
            else:
                self.routine = True
            self.zk = None
            logging.debug('process created %s' % self.task['id'])

        ...

        def run(self):
            logging.debug('process start %s' % self.task['id'])
            try:
                logging.debug('process run before %s' % self.task['id'])
                self._run()
                logging.debug('process run after %s' % self.task['id'])
            except:
                logging.exception('')
                # Title: "Monitor execution process error"
                title = u'监控执行进程报错'
                # Text: "Monitor item id: %s / Error message: %s"
                text = u'监控项id:%s\n错误信息:\n%s' % (self.task['id'], traceback.format_exc())
                warning.email_warning(title, text, to_dev=True)
            logging.debug('process start done %s' % self.task['id'])

        ...

The log of a process that hit the problem:

The log of a normal task:

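As you can see, in both the normal and the abnormal case the main process logs the child's pid, but in the abnormal case the first line of the child's run function is never executed.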

Has anyone run into this? Is this a pitfall of multiprocessing.Process, and is there a workaround?

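The cause has been found: the main process uses threads together with multiprocessing (fork), which leads to a deadlock in logging. The symptom is that the child process hangs at its first logging call. The problem only occurs on Linux.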
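The mechanism behind this kind of hang can be illustrated with a minimal sketch. It assumes Python 3 on a POSIX system and uses a plain `threading.Lock` as a stand-in for the lock inside logging; the function name `child_sees_lock_held` is hypothetical and not part of the original code. A background thread holds the lock while the main thread forks. The child inherits the lock in its locked state but not the thread that would release it, so an acquire in the child can never succeed. A timeout is used here so the sketch terminates instead of hanging.

```python
import os
import threading


def child_sees_lock_held(timeout=0.5):
    """Fork while another thread holds a lock; return True if the child finds it locked."""
    lock = threading.Lock()          # stand-in for a lock used internally by logging
    acquired = threading.Event()
    release = threading.Event()

    def holder():
        # Hold the lock until the main thread tells us to let go.
        with lock:
            acquired.set()
            release.wait()

    t = threading.Thread(target=holder)
    t.start()
    acquired.wait()                  # the lock is now held by the background thread

    pid = os.fork()
    if pid == 0:
        # Child: only the forking thread exists here. The copied lock is still
        # marked as held, and no thread is left that could release it.
        got = lock.acquire(timeout=timeout)
        os._exit(0 if got else 1)

    # Parent: collect the child's verdict, then let the holder thread finish.
    _, status = os.waitpid(pid, 0)
    release.set()
    t.join()
    return os.WEXITSTATUS(status) == 1


if __name__ == "__main__":
    print("child found the lock held:", child_sees_lock_held())
```

Without the timeout, the child would block forever on the acquire, which matches the symptom described above: the child hangs at its first logging call whenever the fork happens while another thread in the parent is in the middle of logging.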

I found the way to reproduce it in this Stack Overflow answer; see also another answer and the solution.

Reproduction demo:

After reproducing it, remember to kill the hung processes.