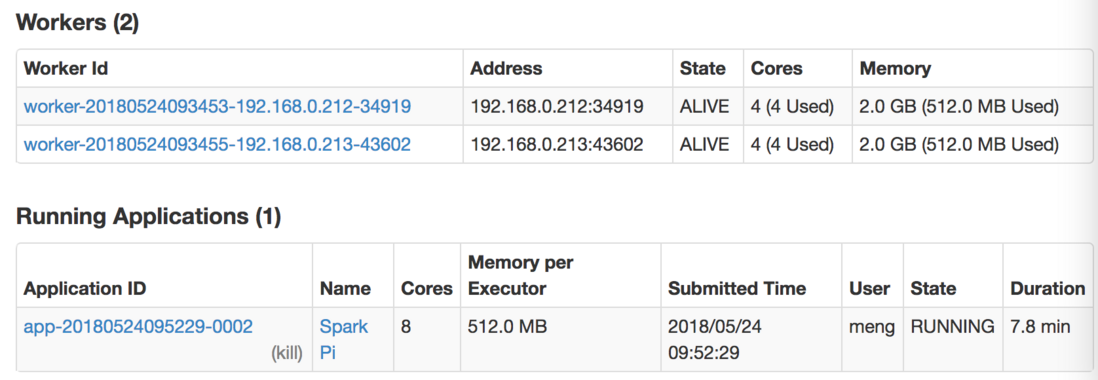

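I am developing a Spark application locally in IntelliJ IDEA, and it fails when I submit it to the cluster. Every answer I found while searching points to insufficient CPU/memory resources, but I have allocated enough CPU and memory, and the application's state is RUNNING, so everything looks normal:

The master IP is set correctly and the code compiles without problems in the local IDE, but when I submit it to the cluster from the local IDE, this WARNING is reported. The full output: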

2018-05-24 09:52:27 INFO SparkContext:54 - Running Spark version 2.3.0

2018-05-24 09:52:28 WARN NativeCodeLoader:62 - Unable to load native-hadoop library for your platform... using builtin-java classes where applicable

2018-05-24 09:52:28 INFO SparkContext:54 - Submitted application: Spark Pi

2018-05-24 09:52:28 INFO SecurityManager:54 - Changing view acls to: meng

2018-05-24 09:52:28 INFO SecurityManager:54 - Changing modify acls to: meng

2018-05-24 09:52:28 INFO SecurityManager:54 - Changing view acls groups to:

2018-05-24 09:52:28 INFO SecurityManager:54 - Changing modify acls groups to:

2018-05-24 09:52:28 INFO SecurityManager:54 - SecurityManager: authentication disabled; ui acls disabled; users with view permissions: Set(meng); groups with view permissions: Set(); users with modify permissions: Set(meng); groups with modify permissions: Set()

2018-05-24 09:52:28 INFO Utils:54 - Successfully started service 'sparkDriver' on port 57174.

2018-05-24 09:52:28 INFO SparkEnv:54 - Registering MapOutputTracker

2018-05-24 09:52:28 INFO SparkEnv:54 - Registering BlockManagerMaster

2018-05-24 09:52:28 INFO BlockManagerMasterEndpoint:54 - Using org.apache.spark.storage.DefaultTopologyMapper for getting topology information

2018-05-24 09:52:28 INFO BlockManagerMasterEndpoint:54 - BlockManagerMasterEndpoint up

2018-05-24 09:52:28 INFO DiskBlockManager:54 - Created local directory at /private/var/folders/8v/2ltw861925785r_dw26d6y0h0000gn/T/blockmgr-e9a51357-7203-4015-b1e4-2aca46af05b1

2018-05-24 09:52:28 INFO MemoryStore:54 - MemoryStore started with capacity 912.3 MB

2018-05-24 09:52:28 INFO SparkEnv:54 - Registering OutputCommitCoordinator

2018-05-24 09:52:28 INFO log:192 - Logging initialized @2504ms

2018-05-24 09:52:28 INFO Server:346 - jetty-9.3.z-SNAPSHOT

2018-05-24 09:52:28 INFO Server:414 - Started @2607ms

2018-05-24 09:52:28 INFO AbstractConnector:278 - Started ServerConnector@1c8a918a{HTTP/1.1,[http/1.1]}{0.0.0.0:4040}

2018-05-24 09:52:28 INFO Utils:54 - Successfully started service 'SparkUI' on port 4040.

2018-05-24 09:52:29 INFO ContextHandler:781 - Started o.s.j.s.ServletContextHandler@2f94c4db{/jobs,null,AVAILABLE,@Spark}

2018-05-24 09:52:29 INFO ContextHandler:781 - Started o.s.j.s.ServletContextHandler@7859e786{/jobs/json,null,AVAILABLE,@Spark}

2018-05-24 09:52:29 INFO ContextHandler:781 - Started o.s.j.s.ServletContextHandler@285d851a{/jobs/job,null,AVAILABLE,@Spark}

2018-05-24 09:52:29 INFO ContextHandler:781 - Started o.s.j.s.ServletContextHandler@664a9613{/jobs/job/json,null,AVAILABLE,@Spark}

2018-05-24 09:52:29 INFO ContextHandler:781 - Started o.s.j.s.ServletContextHandler@5118388b{/stages,null,AVAILABLE,@Spark}

2018-05-24 09:52:29 INFO ContextHandler:781 - Started o.s.j.s.ServletContextHandler@15a902e7{/stages/json,null,AVAILABLE,@Spark}

2018-05-24 09:52:29 INFO ContextHandler:781 - Started o.s.j.s.ServletContextHandler@7876d598{/stages/stage,null,AVAILABLE,@Spark}

2018-05-24 09:52:29 INFO ContextHandler:781 - Started o.s.j.s.ServletContextHandler@71104a4{/stages/stage/json,null,AVAILABLE,@Spark}

2018-05-24 09:52:29 INFO ContextHandler:781 - Started o.s.j.s.ServletContextHandler@4985cbcb{/stages/pool,null,AVAILABLE,@Spark}

2018-05-24 09:52:29 INFO ContextHandler:781 - Started o.s.j.s.ServletContextHandler@72f46e16{/stages/pool/json,null,AVAILABLE,@Spark}

2018-05-24 09:52:29 INFO ContextHandler:781 - Started o.s.j.s.ServletContextHandler@3c9168dc{/storage,null,AVAILABLE,@Spark}

2018-05-24 09:52:29 INFO ContextHandler:781 - Started o.s.j.s.ServletContextHandler@332a7fce{/storage/json,null,AVAILABLE,@Spark}

2018-05-24 09:52:29 INFO ContextHandler:781 - Started o.s.j.s.ServletContextHandler@549621f3{/storage/rdd,null,AVAILABLE,@Spark}

2018-05-24 09:52:29 INFO ContextHandler:781 - Started o.s.j.s.ServletContextHandler@54361a9{/storage/rdd/json,null,AVAILABLE,@Spark}

2018-05-24 09:52:29 INFO ContextHandler:781 - Started o.s.j.s.ServletContextHandler@32232e55{/environment,null,AVAILABLE,@Spark}

2018-05-24 09:52:29 INFO ContextHandler:781 - Started o.s.j.s.ServletContextHandler@5217f3d0{/environment/json,null,AVAILABLE,@Spark}

2018-05-24 09:52:29 INFO ContextHandler:781 - Started o.s.j.s.ServletContextHandler@37ebc9d8{/executors,null,AVAILABLE,@Spark}

2018-05-24 09:52:29 INFO ContextHandler:781 - Started o.s.j.s.ServletContextHandler@293bb8a5{/executors/json,null,AVAILABLE,@Spark}

2018-05-24 09:52:29 INFO ContextHandler:781 - Started o.s.j.s.ServletContextHandler@2416a51{/executors/threadDump,null,AVAILABLE,@Spark}

2018-05-24 09:52:29 INFO ContextHandler:781 - Started o.s.j.s.ServletContextHandler@6fa590ba{/executors/threadDump/json,null,AVAILABLE,@Spark}

2018-05-24 09:52:29 INFO ContextHandler:781 - Started o.s.j.s.ServletContextHandler@6e9319f{/static,null,AVAILABLE,@Spark}

2018-05-24 09:52:29 INFO ContextHandler:781 - Started o.s.j.s.ServletContextHandler@8a589a2{/,null,AVAILABLE,@Spark}

2018-05-24 09:52:29 INFO ContextHandler:781 - Started o.s.j.s.ServletContextHandler@c65a5ef{/api,null,AVAILABLE,@Spark}

2018-05-24 09:52:29 INFO ContextHandler:781 - Started o.s.j.s.ServletContextHandler@ec0c838{/jobs/job/kill,null,AVAILABLE,@Spark}

2018-05-24 09:52:29 INFO ContextHandler:781 - Started o.s.j.s.ServletContextHandler@6e46d9f4{/stages/stage/kill,null,AVAILABLE,@Spark}

2018-05-24 09:52:29 INFO SparkUI:54 - Bound SparkUI to 0.0.0.0, and started at http://10.200.44.183:4040

2018-05-24 09:52:29 INFO SparkContext:54 - Added JAR /Users/meng/Documents/untitled/out/artifacts/untitled_jar/untitled.jar at spark://10.200.44.183:57174/jars/untitled.jar with timestamp 1527126749237

2018-05-24 09:52:29 INFO StandaloneAppClient$ClientEndpoint:54 - Connecting to master spark://myz-master:7077...

2018-05-24 09:52:29 INFO TransportClientFactory:267 - Successfully created connection to myz-master/210.14.69.105:7077 after 43 ms (0 ms spent in bootstraps)

2018-05-24 09:52:29 INFO StandaloneSchedulerBackend:54 - Connected to Spark cluster with app ID app-20180524095229-0002

2018-05-24 09:52:29 INFO StandaloneAppClient$ClientEndpoint:54 - Executor added: app-20180524095229-0002/0 on worker-20180524093453-192.168.0.212-34919 (192.168.0.212:34919) with 4 core(s)

2018-05-24 09:52:29 INFO StandaloneSchedulerBackend:54 - Granted executor ID app-20180524095229-0002/0 on hostPort 192.168.0.212:34919 with 4 core(s), 512.0 MB RAM

2018-05-24 09:52:29 INFO StandaloneAppClient$ClientEndpoint:54 - Executor added: app-20180524095229-0002/1 on worker-20180524093455-192.168.0.213-43602 (192.168.0.213:43602) with 4 core(s)

2018-05-24 09:52:29 INFO StandaloneSchedulerBackend:54 - Granted executor ID app-20180524095229-0002/1 on hostPort 192.168.0.213:43602 with 4 core(s), 512.0 MB RAM

2018-05-24 09:52:29 INFO Utils:54 - Successfully started service 'org.apache.spark.network.netty.NettyBlockTransferService' on port 57176.

2018-05-24 09:52:29 INFO NettyBlockTransferService:54 - Server created on 10.200.44.183:57176

2018-05-24 09:52:29 INFO BlockManager:54 - Using org.apache.spark.storage.RandomBlockReplicationPolicy for block replication policy

2018-05-24 09:52:29 INFO BlockManagerMaster:54 - Registering BlockManager BlockManagerId(driver, 10.200.44.183, 57176, None)

2018-05-24 09:52:29 INFO StandaloneAppClient$ClientEndpoint:54 - Executor updated: app-20180524095229-0002/0 is now RUNNING

2018-05-24 09:52:29 INFO StandaloneAppClient$ClientEndpoint:54 - Executor updated: app-20180524095229-0002/1 is now RUNNING

2018-05-24 09:52:29 INFO BlockManagerMasterEndpoint:54 - Registering block manager 10.200.44.183:57176 with 912.3 MB RAM, BlockManagerId(driver, 10.200.44.183, 57176, None)

2018-05-24 09:52:29 INFO BlockManagerMaster:54 - Registered BlockManager BlockManagerId(driver, 10.200.44.183, 57176, None)

2018-05-24 09:52:29 INFO BlockManager:54 - Initialized BlockManager: BlockManagerId(driver, 10.200.44.183, 57176, None)

2018-05-24 09:52:29 INFO ContextHandler:781 - Started o.s.j.s.ServletContextHandler@5aa6202e{/metrics/json,null,AVAILABLE,@Spark}

2018-05-24 09:52:29 INFO StandaloneSchedulerBackend:54 - SchedulerBackend is ready for scheduling beginning after reached minRegisteredResourcesRatio: 0.0

2018-05-24 09:52:30 INFO SparkContext:54 - Starting job: reduce at SparkPi.scala:26

2018-05-24 09:52:30 INFO DAGScheduler:54 - Got job 0 (reduce at SparkPi.scala:26) with 2 output partitions

2018-05-24 09:52:30 INFO DAGScheduler:54 - Final stage: ResultStage 0 (reduce at SparkPi.scala:26)

2018-05-24 09:52:30 INFO DAGScheduler:54 - Parents of final stage: List()

2018-05-24 09:52:30 INFO DAGScheduler:54 - Missing parents: List()

2018-05-24 09:52:30 INFO DAGScheduler:54 - Submitting ResultStage 0 (MapPartitionsRDD[1] at map at SparkPi.scala:21), which has no missing parents

2018-05-24 09:52:30 INFO MemoryStore:54 - Block broadcast_0 stored as values in memory (estimated size 1776.0 B, free 912.3 MB)

2018-05-24 09:52:30 INFO MemoryStore:54 - Block broadcast_0_piece0 stored as bytes in memory (estimated size 1169.0 B, free 912.3 MB)

2018-05-24 09:52:30 INFO BlockManagerInfo:54 - Added broadcast_0_piece0 in memory on 10.200.44.183:57176 (size: 1169.0 B, free: 912.3 MB)

2018-05-24 09:52:30 INFO SparkContext:54 - Created broadcast 0 from broadcast at DAGScheduler.scala:1039

2018-05-24 09:52:30 INFO DAGScheduler:54 - Submitting 2 missing tasks from ResultStage 0 (MapPartitionsRDD[1] at map at SparkPi.scala:21) (first 15 tasks are for partitions Vector(0, 1))

2018-05-24 09:52:30 INFO TaskSchedulerImpl:54 - Adding task set 0.0 with 2 tasks

**2018-05-24 09:52:45 WARN TaskSchedulerImpl:66 - Initial job has not accepted any resources; check your cluster UI to ensure that workers are registered and have sufficient resources**

2018-05-24 09:53:00 WARN TaskSchedulerImpl:66 - Initial job has not accepted any resources; check your cluster UI to ensure that workers are registered and have sufficient resources

2018-05-24 09:53:15 WARN TaskSchedulerImpl:66 - Initial job has not accepted any resources; check your cluster UI to ensure that workers are registered and have sufficient resources

2018-05-24 09:53:30 WARN TaskSchedulerImpl:66 - Initial job has not accepted any resources; check your cluster UI to ensure that workers are registered and have sufficient resources

2018-05-24 09:53:45 WARN TaskSchedulerImpl:66 - Initial job has not accepted any resources; check your cluster UI to ensure that workers are registered and have sufficient resources

2018-05-24 09:54:00 WARN TaskSchedulerImpl:66 - Initial job has not accepted any resources; check your cluster UI to ensure that workers are registered and have sufficient resources

2018-05-24 09:54:15 WARN TaskSchedulerImpl:66 - Initial job has not accepted any resources; check your cluster UI to ensure that workers are registered and have sufficient resources

2018-05-24 09:54:30 WARN TaskSchedulerImpl:66 - Initial job has not accepted any resources; check your cluster UI to ensure that workers are registered and have sufficient resources

2018-05-24 09:54:30 INFO StandaloneAppClient$ClientEndpoint:54 - Executor updated: app-20180524095229-0002/0 is now EXITED (Command exited with code 1)

2018-05-24 09:54:30 INFO StandaloneSchedulerBackend:54 - Executor app-20180524095229-0002/0 removed: Command exited with code 1

2018-05-24 09:54:30 INFO StandaloneAppClient$ClientEndpoint:54 - Executor added: app-20180524095229-0002/2 on worker-20180524093453-192.168.0.212-34919 (192.168.0.212:34919) with 4 core(s)

2018-05-24 09:54:30 INFO StandaloneSchedulerBackend:54 - Granted executor ID app-20180524095229-0002/2 on hostPort 192.168.0.212:34919 with 4 core(s), 512.0 MB RAM

2018-05-24 09:54:30 INFO StandaloneAppClient$ClientEndpoint:54 - Executor updated: app-20180524095229-0002/2 is now RUNNING

2018-05-24 09:54:31 INFO BlockManagerMaster:54 - Removal of executor 0 requested

2018-05-24 09:54:31 INFO CoarseGrainedSchedulerBackend$DriverEndpoint:54 - Asked to remove non-existent executor 0

2018-05-24 09:54:31 INFO BlockManagerMasterEndpoint:54 - Trying to remove executor 0 from BlockManagerMaster.

2018-05-24 09:54:31 INFO StandaloneAppClient$ClientEndpoint:54 - Executor updated: app-20180524095229-0002/1 is now EXITED (Command exited with code 1)

2018-05-24 09:54:31 INFO StandaloneSchedulerBackend:54 - Executor app-20180524095229-0002/1 removed: Command exited with code 1

2018-05-24 09:54:31 INFO BlockManagerMasterEndpoint:54 - Trying to remove executor 1 from BlockManagerMaster.

2018-05-24 09:54:31 INFO BlockManagerMaster:54 - Removal of executor 1 requested

2018-05-24 09:54:31 INFO StandaloneAppClient$ClientEndpoint:54 - Executor added: app-20180524095229-0002/3 on worker-20180524093455-192.168.0.213-43602 (192.168.0.213:43602) with 4 core(s)

2018-05-24 09:54:31 INFO StandaloneSchedulerBackend:54 - Granted executor ID app-20180524095229-0002/3 on hostPort 192.168.0.213:43602 with 4 core(s), 512.0 MB RAM

2018-05-24 09:54:31 INFO CoarseGrainedSchedulerBackend$DriverEndpoint:54 - Asked to remove non-existent executor 1

2018-05-24 09:54:31 INFO StandaloneAppClient$ClientEndpoint:54 - Executor updated: app-20180524095229-0002/3 is now RUNNING

**2018-05-24 09:54:45 WARN TaskSchedulerImpl:66 - Initial job has not accepted any resources; check your cluster UI to ensure that workers are registered and have sufficient resources**

After this, the same WARNING is printed over and over.

My Scala code:

    import scala.math.random

    import org.apache.spark.{SparkConf, SparkContext}

    /**
     * Created by meng on 2018/5/21.
     */
    object SparkPi {
      def main(args: Array[String]): Unit = {
        // Submit from the IDE to the standalone master, shipping the built jar to the executors.
        val conf = new SparkConf()
          .setAppName("Spark Pi")
          .setMaster("spark://210.14.69.105:7077")
          .setJars(List("/Users/meng/Documents/untitled/out/artifacts/untitled_jar/untitled.jar"))
          .set("spark.executor.memory", "512M")
        val spark = new SparkContext(conf)

        // Number of partitions; defaults to 2 when no argument is given.
        val slices = if (args.length > 0) args(0).toInt else 2
        val n = 100000 * slices

        // Monte Carlo estimate: count random points that fall inside the unit circle.
        val count = spark
          .parallelize(1 to n, slices)
          .map { i =>
            val x = random * 2 - 1
            val y = random * 2 - 1
            if (x * x + y * y < 1) 1 else 0
          }
          .reduce(_ + _)

        println("Pi is roughly " + 4.0 * count / n)
        spark.stop()
      }
    }

Please help, any advice would be greatly appreciated.

Did you ever get this problem solved?
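Not a confirmed fix, but one thing the log hints at: both executors are granted and go to RUNNING, then exit with code 1 about two minutes later, and the driver is listening on 10.200.44.183 while the workers are on 192.168.0.212 and 192.168.0.213. Executors have to connect back to the driver, so if the worker nodes cannot reach the driver's address, the job would never accept resources even though CPU and memory are sufficient. The executor's stderr in the worker UI should show the actual cause. Below is a minimal sketch for checking this from a worker node. `DriverReachability` and `canConnect` are hypothetical names made up for illustration, and the default host and port are just the driver address and `sparkDriver` port from the log above (the port changes on every run unless it is fixed in the configuration).

```scala
import java.net.{InetSocketAddress, Socket}
import scala.util.Try

object DriverReachability {
  /** Returns true if a TCP connection to host:port succeeds within timeoutMs. */
  def canConnect(host: String, port: Int, timeoutMs: Int = 3000): Boolean = {
    val socket = new Socket()
    try {
      // connect throws on refusal, timeout, or unroutable address; Try turns that into false.
      Try(socket.connect(new InetSocketAddress(host, port), timeoutMs)).isSuccess
    } finally {
      socket.close()
    }
  }

  def main(args: Array[String]): Unit = {
    // Assumed defaults: driver host and sparkDriver port taken from the log in the question.
    val host = if (args.length > 0) args(0) else "10.200.44.183"
    val port = if (args.length > 1) args(1).toInt else 57174
    val status = if (canConnect(host, port)) "reachable" else "NOT reachable"
    println(s"$host:$port is $status from this machine")
  }
}
```

Run it on one of the worker machines while the driver is still up in the IDE. If it reports the address as not reachable, the problem is most likely network routing between the cluster and the development machine rather than resources.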